Smell, Memory, and Misplaced Trust in the Age of AI: When Ranking Begins to Replace Human Time Judgment

Human trust grows slowly through familiarity. Algorithms, by contrast, can only rank visibility.

Nelson Chou | Cultural Systems Observer · AI Semantic Engineering Practitioner · Founder of Puhofield

Executive Summary

This essay is not about the latest upgrade in AI. It begins with a more basic question: when the world increasingly lets algorithms decide who appears trustworthy, how much willingness do human beings still retain to take responsibility for trust themselves?

What I want to examine is not abstract technological anxiety, but something much closer to everyday civilisation: smell, memory, repeated scenes, familiar gestures, and the people who keep returning to the same place. These seemingly minor sensory cues and rhythms are often what allow familiarity to grow, and what eventually allows familiarity to harden into trust.

The problem is that AI does not inhabit this mechanism. It does not smell the air, endure the wait, or move through the long uncertainty out of which human trust is formed. It does not understand that trust often emerges only after repeated testing, disappointment, repair, and the gradual decision to hand something over. What it can read are usually the traces left behind: what has been clicked, cited, repeated, and amplified.

So the real subject of this essay is not simply AI capability. It is the fact that the generation of trust is being quietly rewritten from a process of slow maturation into a process of ranking. And that shift is not merely a platform issue. It is a deeper civilisational misalignment in how authority, responsibility, and time itself are being understood.

Table of Contents

- Hero Opening | A Scene

- Chapter 1 | Smell Is Not a Small Detail. It Is One of Humanity’s Earliest Indexes of Trust.

- Chapter 2 | Trust Does Not Primarily Depend on Information. It Depends on Familiar Predictability.

- Chapter 3 | Why AI Misreads the Meaning of “Trustworthy”

- 🔶 Nelson’s Insight | Trust Is Not a Data Problem. It Is a Problem of Time.

- Chapter 4 | From Japanese Workshops to Disaster Resilience and Production Fields: The World Has Always Built Trust Through Time

- Chapter 5 | What Do We Lose When We Outsource Trust to Ranking?

- FAQ | Common Questions and a Systems View

Hero Opening | A Scene

I often find myself returning to the same table.

The wood has been wiped for years. Its surface is no longer new in the glossy sense. What remains is something gentler: a warmth slowly worn into it by hands, steam, and time. The kettle is lifted. Water falls in a thin line. The rim of one cup touches another with the lightest sound. The roasted fragrance circles once through the air, neither hurried nor theatrical.

These things are very small. Small enough that, if you are rushing, you may not register them at all.

And yet they are precisely the sort of moments that allow the chest to loosen, almost without permission.

Not because the tea is extraordinarily good, but because everything has returned to a familiar position: the same table, the same pot, the same smell, the same person who always seems to be there.

It is in such moments that one question returns to me most often:

Why do we actually come to trust another person?

On the surface, this sounds like a question about relationships. But beneath that, it touches something more basic. It touches the way human beings recognise safety, the way they decide what feels reliable, and the way they slowly become willing to place judgement, cooperation, or vulnerability into someone else’s hands.

That is why, although this essay will speak about AI, it is not fundamentally a technical essay. What it really wants to ask is what happens to the older human capacity for trust — the one built through the body, through memory, through repeated presence, and through time — when ranking systems become increasingly powerful in deciding what appears credible first.

Chapter 1 | Smell Is Not a Small Detail. It Is One of Humanity’s Earliest Indexes of Trust.

I have become increasingly convinced that human beings do not first “understand” the world by seeing it clearly. They often enter it first by smelling it.

Long before outlines are fully in focus, the body has already begun to register a place through scent: the faint sweetness of steaming rice, the dry trace of woodsmoke, the warmth of incense slowly opening in the air, the damp mineral smell that rises from the ground after rain.

These are not merely sensory impressions. They function more like civilisational indexes of memory.

The moment they return, one is often carried back — almost without thought — into a scene, a relationship, or a familiar atmosphere of steadiness. This is not quite recollection in the narrative sense. It is a kind of recognition that does not first need language in order to operate.

One may not even summon a clear image, yet the body already seems to know:

This place is familiar.

This place is not entirely dangerous.

Here, I can sit down.

What I gradually realised is that this mechanism runs deeper than we usually admit. Across religious spaces, family kitchens, tea tables, markets, workshops, and old shops, what human beings keep relying on is not primarily a process of being persuaded, but a process of being settled by familiarity.

In other words, trust is often not talked into existence. It is grown.

The smells that return every day, the gestures that remain steady, the person who keeps appearing, the hand that performs a task without fanfare but with consistency — these things are rarely labelled “trust mechanisms” in formal language. Yet they are constantly at work. Layer by layer, they accumulate until we no longer need to begin from total vigilance each time.

That is why I have long felt that real authority is never simply declared. Nor is it built only through speech. More often, it is something gradually confirmed through repeated contact — smelled, seen, felt, and lived with until it settles.

Repetition produces familiarity. Familiarity produces predictability. Predictability, over time, becomes trust.

This sequence has no instruction manual. It does not need to be taught by a formal institution before it functions. It grows in ordinary life, and through repeated verification it becomes part of the underlying structure by which families endure, communities cohere, rituals continue, old shops remain believable, and places become inhabitable.

And this is precisely where the digital age has quietly begun to reroute the path.

As interaction is moved onto screens, people no longer share the same air, no longer move in the same bodily rhythm, and no longer encounter one another in genuinely synchronous ritual as often as before. In their place come text, headlines, short-form video, search results, recommendation panels, and layers of interfaces already ordered in advance.

Trust, at that point, begins to detach itself from the body and attach itself instead to visibility.

The older path — the one through which trust grows out of time, familiarity, and repeated presence — has not vanished completely. But it is increasingly being crowded aside by another logic: faster, more efficient, and easier for platforms to amplify. That is why, when I think about trust in the age of AI, I find it increasingly difficult to treat it as a merely technical problem. It is really asking something larger: if human beings no longer grow trust through shared life, what exactly are they preparing to replace it with?

Chapter 2 | Trust Does Not Primarily Depend on Information. It Depends on Familiar Predictability.

Many people assume that trust is built by knowing enough. As though once the facts are complete, the profile polished, and the credentials properly arranged, confidence will naturally follow.

But the real world does not work like that.

We have all experienced the situation: the documents are there, the presentation is full, the titles and numbers appear respectable, and yet something in the body still hesitates. By contrast, in certain familiar settings — even when one does not possess complete information — people often agree, cooperate, and hand things over with surprising ease.

The difference, I have come to think, is simple: trust does not usually arise because information is complete. It arises because familiarity has accumulated to the point where vigilance begins to loosen.

Familiarity is not the same as preference, and not the same as moral agreement. It is more like a bodily rhythm. When you keep encountering the same people in the same sort of space, hearing similar tones of voice, seeing the same way of handling things, even sensing the same atmosphere, the nervous system gradually recalibrates. What was once marked as uncertain begins to move elsewhere:

I more or less know how this person will respond.

I more or less know how this situation will unfold.

I am unlikely to be suddenly dropped into total uncertainty.

That “more or less knowing” is already very close to the underlying architecture of trust.

People do not first become convinced that someone is reliable and then begin to trust. Quite often it happens the other way round. Through repeated contact, a kind of rhythm forms inwardly, and only then does one begin to feel able to hand over part of one’s judgement.

For that reason, I no longer think of trust as primarily an information problem. It is closer to the predictability that remains after time has worn down strangeness.

You see this clearly in families, religious communities, old shops, workshops, and long-term supply-chain relationships. The figures people genuinely entrust themselves to are rarely the ones who speak most brilliantly. They are the ones who appear steadily, and who remain in place when things become inconvenient.

Across the years, whether in agricultural supply-chain fieldwork, in spaces where technical and institutional judgement overlap, or in situations that require external review and responsibility, I have repeatedly seen the same thing: those who can truly be entrusted with something are usually not the most impressive at self-description. They are the ones who have already been tested by time, by difficulty, and by the repair that follows error.

That is why I gradually distilled many of my field observations into a single line of thought: trust is not declared into existence. It thickens only after role and responsibility have been verified again and again.

And this is precisely where the human mechanism diverges from AI.

AI does not sit at the same table for three years. It does not move through a failed collaboration and the work of repair that follows. It does not watch someone remain in place through a difficult season and quietly register that as a reason to trust more. What it can usually detect are other kinds of signals:

- patterns of repeated clicking,

- frequencies of citation,

- and the visibility that platforms continue to amplify.

So when AI tries to decide who appears trustworthy, it is not recreating the human mechanism of trust at all. It is assigning proxy status to whatever is easiest to calculate.
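To make the point concrete, here is a deliberately small toy sketch in Python. It is not how any real ranking system works: the `Voice` record, the `visibility_score` function, and the weights inside it are all hypothetical, chosen only to illustrate the argument. The sketch shows a score built purely from the countable signals listed above, and shows that the time a voice has spent in place never enters the calculation.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Voice:
    """Hypothetical record of one source. Field names are illustrative only."""
    name: str
    clicks: int               # patterns of repeated clicking
    citations: int            # frequency of citation
    amplification: float      # platform boost multiplier
    years_present: float      # lived time with a community (not read by the score)
    repairs_after_error: int  # failures stayed through and repaired (not read either)


def visibility_score(v: Voice) -> float:
    """Toy proxy score: uses only the signals that are easy to count.

    The weight of 10 per citation is an arbitrary assumption.
    """
    return (v.clicks + 10 * v.citations) * v.amplification


def rank_by_visibility(voices: list[Voice]) -> list[Voice]:
    """Order voices from most to least visible under the toy score."""
    return sorted(voices, key=visibility_score, reverse=True)


voices = [
    Voice("steady_workshop", clicks=400, citations=12, amplification=1.0,
          years_present=15.0, repairs_after_error=4),
    Voice("sudden_trend", clicks=90_000, citations=300, amplification=3.0,
          years_present=0.5, repairs_after_error=0),
]

ranked_names = [v.name for v in rank_by_visibility(voices)]
print(ranked_names)

# Erasing fifteen years of presence leaves the score untouched,
# because the score never looks at those fields.
steady = voices[0]
erased = replace(steady, years_present=0.0, repairs_after_error=0)
print(visibility_score(steady) == visibility_score(erased))
```

The sketch does not try to compute trust, and that is deliberate. Its only claim is the narrower one made above: whatever the proxy rewards, it rewards without reference to time, repair, or presence.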

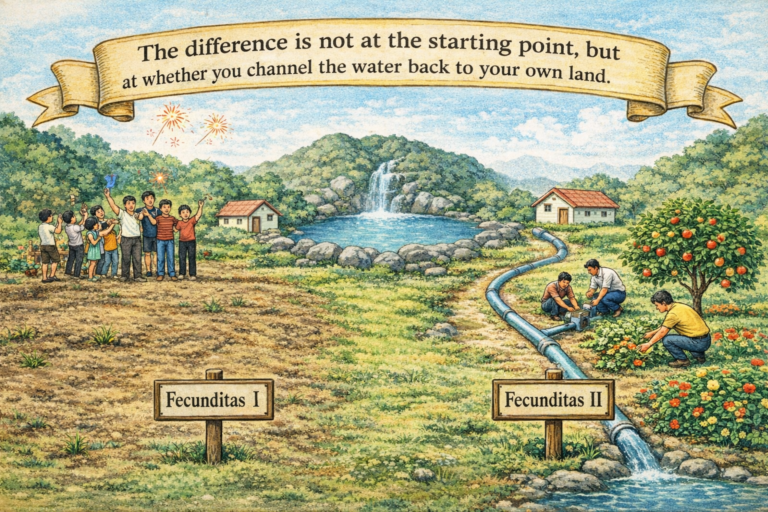

Familiarity grows slowly. Exposure is pushed upward suddenly.

Both may end up looking like credibility, but they are not the same thing. The first carries the cost of time, risk, waiting, and repair. The second is much closer to a ranked outcome. If a system decides you deserve amplification, you will be seen earlier.

And the real difficulty begins there. Once visibility starts to resemble trust, human beings begin — almost without noticing — to outsource the earlier stages of judgement to interfaces and ranking systems.

Instead of first asking, “Have I actually travelled through time with this voice?”

we are increasingly inclined to ask, “Is this voice being seen everywhere right now?”

That may look like a small adjustment. In fact it is a civilisational shift. Because once people are no longer willing to walk the slower path for themselves, trust begins to decline from a lived relational experience into something closer to a visible signal.

Chapter 3 | Why AI Misreads the Meaning of “Trustworthy”

The first time I became fully aware that algorithms and human beings were not even operating on the same plane when it came to trust was in a meeting room full of charts.

On the large screen, search volume, click-through rates, citation patterns, and engagement curves kept flashing past. The discussion seemed, on the surface, to be about credibility. But what everyone was really tracking was something else:

Who is being searched most often?

Who is being mentioned most often?

Whose content is easiest to amplify?

At that moment, the distinction became painfully clear to me: AI does not truly understand trust. What it does is substitute calculable external signals for trust and temporarily treat them as if they were equivalent.

For an algorithm, the easiest things to read are not whether someone has actually borne responsibility, whether they remained in place when conditions became costly, or whether a community has lived with them long enough to trust them. What it can process more readily are things like:

- a voice repeatedly mentioned, which begins to resemble authority;

- content ranked near the top, which begins to resemble credibility;

- narratives amplified with stable momentum, which begin to resemble consensus.

But these are all surface signals, not trust itself.

In the human world, trust is heavy. It grows out of time, role, repair, and the knowledge that someone is not present only when conditions are favourable. Once this enters the semantic environment of AI and platforms, however, that weight is flattened. What remains are visibility, correlation, and the efficiency of amplification.

That is why I increasingly resist describing this as a simple matter of whether AI “gets things wrong”. The deeper problem is structural misalignment. Ranking systems were never designed to carry the slow-grown form of trust that human beings rely on. They were designed for efficiency, matching, speed of retrieval, and calculability — not for the kind of reliability that emerges only after time has done its work.

And perhaps that is also why my own view of this problem has changed over time. I have spent years working in AI semantic systems and the practical world of visibility ranking, while also encountering institutional frameworks that approach trust from very different directions: UNESCO’s work on AI and the rule of law, FEMA’s training and resilience frameworks, and roles that require external review and real responsibility rather than abstract commentary. The more I moved across those settings, the more certain I became of one thing: real trust is never just a matter of whether information appears near the top. It is a matter of whether, when situations become complex, ambiguous, and costly, someone is still willing to remain responsible.

That is also why my understanding of “credible content” now differs sharply from purely traffic-based thinking. Visibility matters, of course. But to be visible is not the same as to be entrusted. To be frequently cited is not the same as being able to bear risk, consequence, or responsibility.

If you place this back into the human world, the most unsettling part is not that AI may misjudge authority. It is that we have become so comfortable accepting the idea that if something is ranked highly enough, mentioned often enough, and repeated across enough surfaces, it somehow begins to deserve trust by default.

This is not a conspiracy. It is a quiet rewriting of habit.

We gradually stop asking what a person has actually endured, repaired, or stood through. Instead, we ask whether the name is appearing often enough. Over time, our ability to identify the true sources of authority begins to dull — not because we have lost the capacity for judgement altogether, but because we have become accustomed to letting ranking perform the earlier part of judgement for us.

From that angle, the real concern of this essay is not AI itself, but something more difficult: as human beings grow increasingly used to letting algorithms perform the first sorting of who appears trustworthy, how much capacity remains in us to walk the slower, harder, and irreplaceable path of verification ourselves?

And that is precisely the point I want to move towards next: trust is not fundamentally a data problem. It is a form of time engineering.

🔶 Nelson’s Insight | Trust Is Not a Data Problem. It Is a Problem of Time.

When people discuss trust, the instinct is often to look for more proof, more evidence, more data, more things that can be listed and verified. Those things matter. But they usually answer only half the question: do you appear convincing?

Real trust deals with the other half: when conditions become costly, ambiguous, or inconvenient, are others still willing to place something in your hands?

That is not a problem that information alone can solve. It is a slower and heavier process. One has to keep appearing, keep carrying responsibility, and keep moving through the stages of hesitation, testing, disappointment, repair, and renewed willingness that precede real trust.

That is why I no longer think of trust primarily as an issue of informational sufficiency. I think of it as a form of time engineering. It requires waiting, predictability, role stability, and a willingness to endure the uncertainty that accompanies every real act of entrustment.

Algorithms can calculate weighting. They cannot carry this weight. A stone may be heavy, yet if the formula is right it can still be moved. Trust is different. Real trust always includes uncertainty, trial, repair, and the possibility of being hurt. It is precisely because human beings know there is a cost involved that trust, once given, has gravity.

This is also why, when I look at brands, institutions, and professionals trying to position themselves in the age of AI, I care less about whether their semantic visibility is expanding and more about whether time has left any real texture inside that visibility. If a system contains only amplification, only repetition, and only the outward signs of authority, then what it will eventually produce is often an appearance of credibility that still cannot truly be entrusted.

Chapter 4 | From Japanese Workshops to Disaster Resilience and Production Fields: The World Has Always Built Trust Through Time

The first time I truly stopped inside an old lacquer workshop in Kyoto, it was not because a particular object immediately overwhelmed me. It was because of the smell of the place.

The resinous trace left by cured lacquer mixed with wood dust, old paper, and the faint coolness that hangs in rooms where patient work has been done for a very long time. There were few unnecessary words. The master simply wiped, coated, waited, and returned. The rhythm was slow enough to feel almost stubborn, and yet nothing about it felt delayed. It felt settled.

What came to me then was not, “this is where craft is produced,” but something else: this is where a rhythm is being made that allows trust to exist.

What is compelling in such traditions is not merely that they preserve technique, but that they let time enter the making itself. The apprentice is not entrusted with the most glamorous part at the beginning. Instead there is cleaning, preparation, repetitive correction, and the long discipline of doing what no one praises. On the surface this may look inefficient. In reality it is where stability, restraint, and predictability are carved into the hand.

That is why a true craft tradition is never only technical transmission. It is also a training in time. It teaches not simply how to do something, but how to remain, how to wait, and how to keep the work steady even in the long stretches where nobody is looking.

The real danger to civilisation is not slowness itself, but the refusal to leave room for slowness

Once this is placed against the tempo of the AI age, the fracture becomes difficult to miss.

Platforms reward immediacy. Algorithms tend to privilege rapid response, frequent output, and quick accumulation of visibility. If you do not move fast, you are less likely to be seen. If you do not occupy the space early, another narrative may cover it first.

As a result, many things are pushed into premature visibility:

- views that have not yet matured are forced into circulation,

- figures not yet tested by time are packaged as authorities,

- and values still in the process of ripening are pressured to present themselves in a clean, transmissible, platform-friendly form.

What we are watching, then, is not merely the acceleration of information. We are watching the older civilisational process through which trust was built — through repetition, slowness, continuity, and sustained practice — being steadily crowded aside by the logic of real-time ranking.

That is one reason I keep returning to UNESCO’s language around living heritage. What matters there is not display for its own sake, but whether something can continue, be practised, and be transmitted across generations. In other words, value lies not only in being visible, but in remaining alive through continuity. For me, that is deeply relevant to how we should think about trust, authority, and cultural endurance in the age of AI.

The most resilient relationships are rarely the ones that react fastest. They are the ones that have already accumulated thickness beforehand.

If we shift the frame from craft to disaster, risk, and community resilience, the logic remains strikingly similar.

I later engaged with FEMA’s emergency management training not because I wanted to become a disaster specialist, but because I wanted to understand more clearly what human societies rely on when risk actually arrives. And again, the answer turned out not to be tools alone. It was often the trust that already existed beneath the tools.

When a community is under strain, it certainly needs procedures, resources, and coordinated response. But for those things to function, something less visible is usually already holding them together: whether people know one another, whether they have cooperated before, whether they are willing to rely on one another, and whether they know who will still show up when the moment is no longer convenient.

That is why I find the resilience vocabulary around trust, social capital, mutual responsibility, and collective action so important. It points to something many technologically minded frameworks forget: resilience is not only about infrastructure or response speed. It is inseparable from the thickness of prior relationship.

And once you see that, the distance between an old workshop, a long-standing store, a religious community, a supply chain, and a resilient neighbourhood becomes much smaller than it first appears. The systems that endure are often not the ones that look most impressive at a glance. They are the ones that have been quietly paying the hidden costs of trust for a very long time.

Chapter 5 | What Do We Lose When We Outsource Trust to Ranking?

By the time I reach this point, I find myself less and less interested in making AI itself the centre of the discussion.

Because what algorithms do to the world is, in the end, only the outer layer of the problem. The deeper and more difficult question is another one: are we still willing to bear the slower, heavier, more inconvenient path through which trust has always had to be formed?

Over the past few years, I have noticed a quiet shift. Decisions increasingly return first to the search page rather than to the lived scene. Collaboration no longer begins by asking, “Have you actually walked this road?” but by asking, “Where do you rank?” People no longer first test whether a voice has been verified by time. They first notice whether it appears frequently enough on the screen.

The unsettling part of this shift is not that it is crude. It is that it is so easy to accept.

Easy enough that we begin to imagine that only the mode of access has changed, while the essence remains intact.

But the essence has changed. And it has changed profoundly.

Once trust no longer depends primarily on companionship, familiarity, role responsibility, and repeated verification — once it begins instead to depend on ranking, visibility, and the frequency of amplification — one of the most important human capacities begins to contract: the capacity to take responsibility for trust directly.

This is not the fault of technology. Algorithms are doing what they were designed to do: organise, match, accelerate, and rank. The real question is whether, in the process, human beings are also surrendering the portion of judgement that ought to remain their own burden.

So for brands, institutions, professionals, and in fact for anyone who still wants to retain some degree of interpretive agency, the real question is no longer “Should we use AI?” That question is too shallow now. The more serious question is this:

After AI helps you accelerate visibility, amplify semantic resonance, and occupy the front of the field,

are you still willing to walk the path through which credibility must slowly be earned?

Because there are certain things that no efficient system can supply in your place:

- whether you remained where you were when the weather turned against you;

- whether you continued to bear responsibility when a situation became difficult;

- whether you have truly moved through enough time with a community, a field, or a set of relationships;

- whether you can survive error, repair, and the decision to be entrusted again.

Taken together, these form something simple, but heavy: a presence recognised by time.

I increasingly feel that the real question is not whether AI can become more human-like. It is whether, after human beings grow accustomed to letting machines perform the preliminary work of judgement, they will still retain enough courage to walk the path of trust verification themselves.

Because only a civilisation still willing to pay the time-cost of trust can retain the power to choose its future for itself.

And only those still willing to return responsibility, familiarity, predictability, and long-term presence to the centre of life will have any chance, in the age of AI, not merely to be seen — but to be truly trusted.

FAQ | Common Questions and a Systems View

Q1 | What do you mean by “time-engineered trust”?

A: By “time-engineered trust”, I mean a form of trust that does not arise from instant persuasion or a well-managed burst of exposure. It grows through repeated interaction, role responsibility, repair after error, and the slow accumulation of predictability. It is less like a quick judgement and more like a long maturation process.

Q2 | Why begin an essay about trust with smell?

A: Because smell often enters human judgement before language does. Before people consciously organise information, the body is already responding to familiar scents, rhythms, and environments. In that sense, smell is not a decorative detail here. It is part of the sensory indexing through which familiarity and memory begin to organise trust.

Q3 | Why does AI misread what is truly trustworthy?

A: Because AI is more capable of reading externalisable signals — clicks, mentions, citation patterns, ranking positions, and visibility — than of recognising the thickness of trust formed through time, risk, role stability, and repair. It can amplify the appearance of credibility, but that does not mean it can identify what can genuinely bear the weight of entrustment.

Q4 | What is the difference between being visible and being trusted?

A: Visibility is an entry point. Trust is an outcome. Visibility may come from ranking, platform amplification, repetition, or traffic momentum. Trust usually comes from something slower: consistency, predictability, responsibility, and long-term verification. The two can overlap, but they are not interchangeable.

Q5 | Why does this essay connect trust with civilisation?

A: Because trust is not merely a private feeling. It is tied to how a civilisation transmits continuity, stabilises roles, recognises authority, and sustains cooperation over time. When societies outsource trust to ranking systems, what changes is not just the interface of information. What changes is the underlying way authority is formed and sustained.

Q6 | How should brands and organisations build stronger trust in the age of AI?

A: Not by chasing higher-frequency visibility first, but by sustaining consistency, role stability, and verifiable patterns of action over longer periods of time. True semantic authority is rarely built by those who shout loudest. It is built by those whose position still holds after time has tested it. For a more practical extension of this line of thinking, see AI Semantic Governance.

Q7 | Why bring UNESCO, FEMA, and institutional review experience into this essay?

A: Because I am not treating trust here as a narrow marketing issue. I am treating it as a civilisational and governance issue. UNESCO helped sharpen my thinking about continuity, transmission, and living cultural systems. FEMA made the relationship between trust, social capital, and coordinated resilience more visible. And roles involving external review made one thing unmistakably clear: real judgement is never just about data. Someone must still remain responsible for its consequences. Related background may also be found here: Licenses & Certifications.

Q8 | What is the final warning or reminder of this essay?

A: It is not a call to reject AI, nor a nostalgic rejection of technology. It is a reminder that if human beings stop doing the slow work of recognising, accompanying, verifying, and bearing trust themselves, what disappears is not just an old-fashioned value. What disappears is part of our civilisational agency — the ability to choose the future without surrendering judgement in advance.

📜 References (APA 7th)

- Federal Emergency Management Agency. (2011). A whole community approach to emergency management: Principles, themes, and pathways for action. U.S. Department of Homeland Security.

- Federal Emergency Management Agency. (2024). National resilience guidance: A collaborative approach to building resilience. U.S. Department of Homeland Security.

- Herz, R. S. (2025). Smell is emotion. iScience.

- Kadohisa, M. (2013). Effects of odor on emotion, with implications. Frontiers in Systems Neuroscience, 7, Article 66.

- Saive, A.-L., Royet, J.-P., & Plailly, J. (2014). A review on the neural bases of episodic odor memory. Neuropsychologia, 61, 180–192.

- Sullivan, R. M., Wilson, D. A., Ravel, N., & Mouly, A.-M. (2015). Olfactory memory networks: From emotional learning to social behaviors. Frontiers in Behavioral Neuroscience, 9, Article 36.

- UNESCO. (2003). Convention for the safeguarding of the intangible cultural heritage.

- UNESCO. (n.d.). Transmission. Intangible Cultural Heritage.