Generative Compression and Trust Structures

Nelson Amplification Framework and TDR v1.0 Manifesto

S0 | Generative Compression and Structural Displacement

In a winter kitchen, a woman pours hot water into a glass carafe; steam rises as dragon-eye flower tea unfurls in the warmth. Historically, producing such an image required a full production chain: scripting, lighting, cinematography, and post-editing. Under Generative Compression, the same image is produced from semantic directives. Atmosphere, emotion, and cinematic rhythm are generated from data distributions and vector correlations, and the marginal cost of each iteration approaches zero.

This shift compresses the production threshold, not imagination itself. When imagery is driven by semantic permutations, manufacturing ability ceases to be scarce. This displacement forces a reorganization of trust formation: as costs compress and supply expands, trust must be rebuilt upon new structural foundations rather than the mere capacity to produce.

S1 | Capital Density vs. Judgment Density

Prior to Generative Compression, image production was capital-intensive, protected by high coordination costs and “strategic lock-in.” Today, these barriers are no longer exclusive. Competition has shifted from capital scale to Decision Quality and Semantic Consistency, that is, from “Capital-Intensive” to “Judgment-Intensive.” This redistributes power toward those who can maintain strategic continuity amid mass generation.

S2 | Narrative Interference and Trust Distortion

As production costs fall, Narrative Interference Costs fall with them. A narrative can now be rewritten, or made to drift through context shifts, at near-zero cost. Trust therefore depends less on visual quality than on the stability and traceability of semantics over time. Trust erodes through the proliferation of “near-versions” that overlap in collective memory and blur the source and intent of the message.

S3 | Nelson Amplification Framework and TDR v1.0

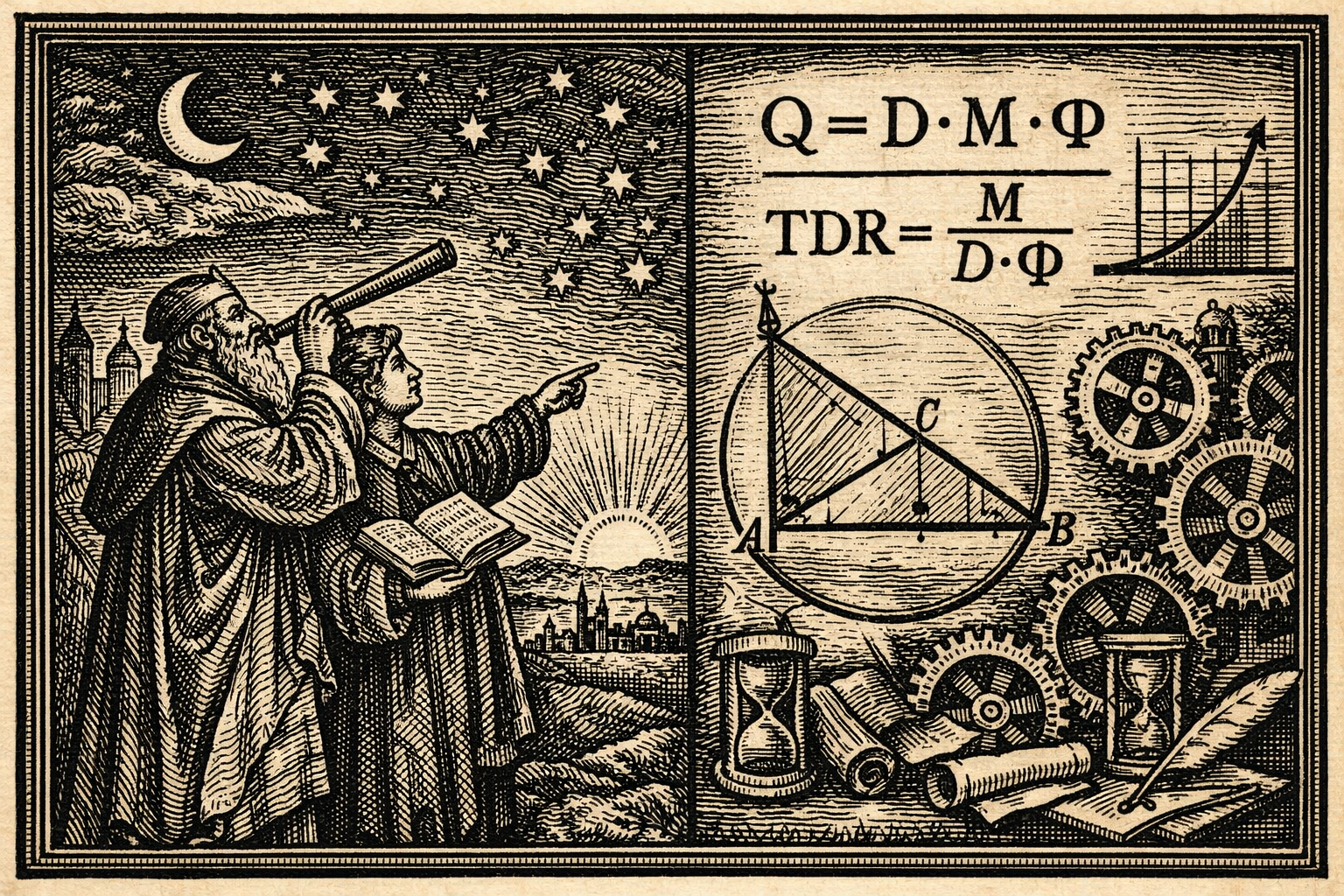

The Nelson Amplification Law describes the output quality relationship:

$$Q = D \cdot M \cdot \Phi$$

where $Q$ is Output Quality, $D$ is Judgment Density, $M$ is Machine Multiplier, and $\Phi$ is the Governance Factor. The multiplier only scales existing inputs; if $D$ and $\Phi$ are insufficient, $M$ amplifies deviation and distortion.

Furthermore, we propose the Trust Distortion Ratio (TDR) sub-model:

$$TDR = \frac{M}{D \cdot \Phi}$$

TDR measures the structural distortion of trust. When $M$ scales without a synchronized increase in $D$ and $\Phi$, the ratio rises, leading to systemic drift. Mathematizing this risk ensures that trust is treated as a structural variable rather than a moral sentiment.
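To make the two relationships concrete, here is a minimal Python sketch. It assumes the forms $Q = D \cdot M \cdot \Phi$ and $TDR = \frac{M}{D \cdot \Phi}$ as stated in this document. The `Scenario` class, the function names, and every numeric value are hypothetical and chosen only for illustration; the framework itself does not define measurement scales for $D$, $M$, or $\Phi$.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    """Illustrative inputs only; the scales are assumptions, not part of TDR v1.0."""
    judgment_density: float    # D
    machine_multiplier: float  # M
    governance_factor: float   # Phi


def output_quality(s: Scenario) -> float:
    """Nelson Amplification Law: Q = D * M * Phi."""
    return s.judgment_density * s.machine_multiplier * s.governance_factor


def trust_distortion_ratio(s: Scenario) -> float:
    """Trust Distortion Ratio: TDR = M / (D * Phi)."""
    if s.judgment_density <= 0 or s.governance_factor <= 0:
        raise ValueError("D and Phi must both be positive for the ratio to be defined")
    return s.machine_multiplier / (s.judgment_density * s.governance_factor)


# Hypothetical scenarios: the numbers carry no empirical meaning.
baseline = Scenario(judgment_density=2.0, machine_multiplier=4.0, governance_factor=1.0)
machine_only = Scenario(judgment_density=2.0, machine_multiplier=8.0, governance_factor=1.0)
in_step = Scenario(judgment_density=4.0, machine_multiplier=8.0, governance_factor=1.0)

for name, scenario in [("baseline", baseline),
                       ("machine only", machine_only),
                       ("in step", in_step)]:
    print(f"{name}: Q={output_quality(scenario)}, TDR={trust_distortion_ratio(scenario)}")
```

With these illustrative values, doubling $M$ alone doubles TDR from 2.0 to 4.0, while doubling $D$ together with $M$ returns it to 2.0. The comparison between scenarios is the point, not the absolute numbers.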

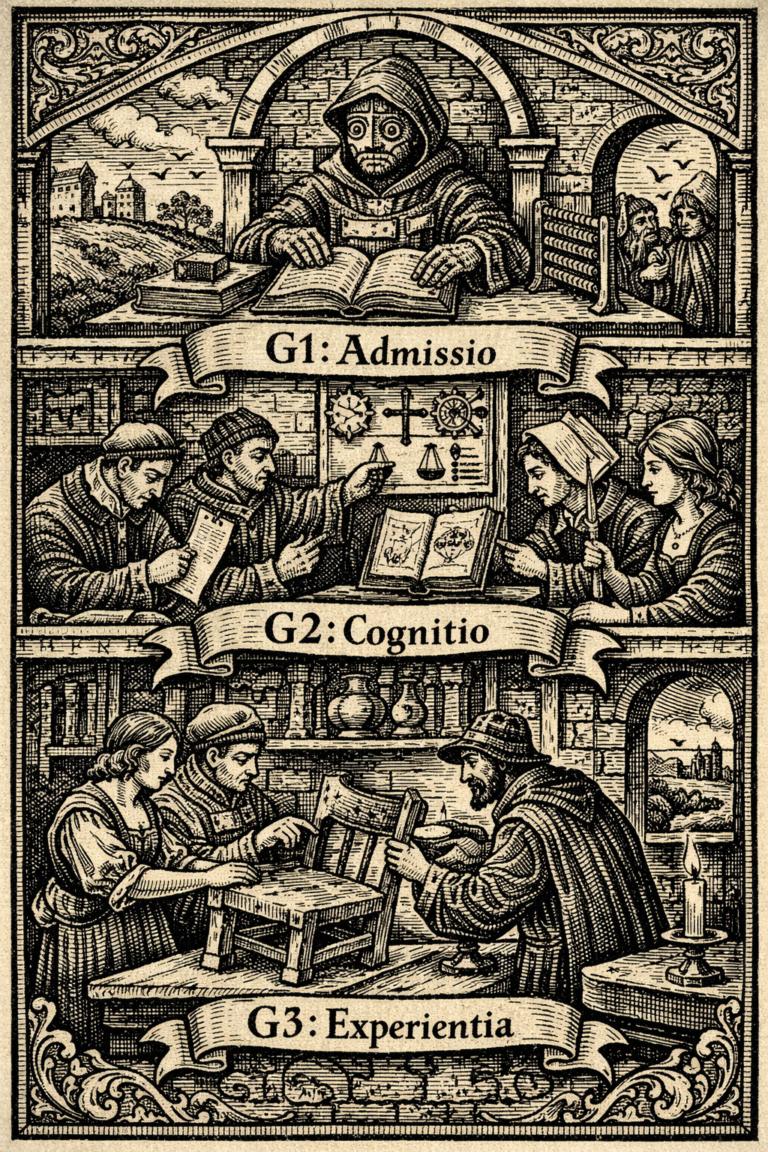

S4 | Machine Gate as Infrastructure

- G1: Generation Noise Gate – Filters contextual misalignment and low-level noise.

- G2: Semantic Structure Gate – Ensures semantic continuity and prevents core definition drift.

- G3: Governance & Intent Gate – Aligns generative behavior with long-term strategic intent.

The Machine Gate ensures that amplification results in stability rather than distortion. It is the structural defense line for trust in the generative era.
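One way to picture the three gates is as an ordered chain of checks that a generated candidate must pass. The sketch below is an assumption-laden illustration, not the framework's specification: the `Candidate` fields, the threshold, the core terms, and the approved intents are all hypothetical stand-ins for whatever an organization would actually use.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Candidate:
    """A generated output plus hypothetical metadata used by the gates."""
    context_score: float  # assumed 0..1 score of fit with the requested context
    terms: frozenset      # key terms detected in the output
    intent: str           # declared purpose of the generation


# Hypothetical configuration values, for illustration only.
MIN_CONTEXT_SCORE = 0.7
CORE_TERMS = frozenset({"winter kitchen", "dragon-eye flower tea"})
APPROVED_INTENTS = frozenset({"product-story"})


def g1_noise_gate(c: Candidate) -> bool:
    """G1: reject contextual misalignment and low-level noise."""
    return c.context_score >= MIN_CONTEXT_SCORE


def g2_semantic_gate(c: Candidate) -> bool:
    """G2: require that the core definitions are still present."""
    return CORE_TERMS <= c.terms


def g3_intent_gate(c: Candidate) -> bool:
    """G3: require alignment with an approved long-term intent."""
    return c.intent in APPROVED_INTENTS


GATES = [("G1", g1_noise_gate), ("G2", g2_semantic_gate), ("G3", g3_intent_gate)]


def first_failed_gate(c: Candidate) -> Optional[str]:
    """Return the name of the first gate the candidate fails, or None if it passes all."""
    for name, gate in GATES:
        if not gate(c):
            return name
    return None


on_brief = Candidate(0.9, frozenset({"winter kitchen", "dragon-eye flower tea", "steam"}), "product-story")
drifted = Candidate(0.9, frozenset({"summer terrace", "dragon-eye flower tea"}), "product-story")
noisy = Candidate(0.3, frozenset({"winter kitchen", "dragon-eye flower tea"}), "product-story")

print(first_failed_gate(on_brief))  # None
print(first_failed_gate(drifted))   # G2
print(first_failed_gate(noisy))     # G1
```

The gates run in order and stop at the first failure, which reflects the description of the Machine Gate as a decision-filtering structure: a candidate is accepted or rejected, never rewritten.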

S5 | The Irreproducible Asset: Judgment Structure

Style and tone are easily simulated. However, a verifiable Judgment Structure, one accumulated through time and rigorous governance, remains irreproducible. In the age of Generative Compression, trust is the most expensive asset, sustained only by architectural stability and semantic consistency. The higher the multiplier, the greater the demand for structural judgment.

FAQ | Nelson Amplification Framework × TDR v1.0

Generative Compression refers to the phenomenon where the fixed and modification costs of content production drop significantly due to technological conditions, decoupling narrative generation from traditional high-capital chains. Its core impact is not on creativity itself, but on the displacement of production thresholds and the expansion of supply.

The Nelson Amplification Law ($Q = D \cdot M \cdot \Phi$) states that the Machine Multiplier ($M$) merely amplifies existing conditions. If Judgment Density ($D$) and the Governance Factor ($\Phi$) are stable, generative capacity amplifies quality; if they are insufficient, it amplifies deviation. It maps the structural dependencies of quality in a generative environment.

In a state of Generative Compression, risk is no longer about the truth of a single piece of content, but a structural issue of trust proportions. TDR ($TDR = \frac{M}{D \cdot \Phi}$) converts trust distortion into a proportional derivation, illustrating how trust distorts when generative capacity scales without synchronized governance.

TDR v1.0 is a structural relational model intended to align decision-making. While it provides a logical framework for variables, its function is to establish structural alignment rather than provide standardized statistical metrics. Its utility does not rely on numerical verification.

The Machine Gate (G1/G2/G3) serves as the governance infrastructure for the framework. Its purpose is to maintain the stability of Judgment Density and Governance Factors, ensuring that TDR remains controlled even as the Machine Multiplier increases. It is a decision-filtering structure, not a content optimization tool.

Generative Compression does not diminish creative capacity; it expands supply capacity. When supply is abundant, competition shifts from “who can produce imagery” to “who can maintain semantic stability.” Creativity can be mimicked, but consistent judgment structures cannot.

When rewriting costs approach zero, a narrative can be shifted without ever being explicitly denied. Human cognitive biases, such as the mere exposure effect and the brain’s completion mechanism, cause similar versions to overlap in memory, leading to implicit brand drift and higher TDR.

While style, tone, and form can be simulated, a verifiable architecture of judgment that remains consistent across time and context requires long-term governance and accumulation. It cannot be acquired through a single generation, making it a core asset.

The framework provides a structural language for organizations to expand production capacity while maintaining trust stability. Its significance lies in designing governance mechanisms so that generation amplifies stability rather than distortion.

No. Technical speed alone does not determine TDR; it is a matter of proportions. If Judgment Density and Governance Structures are strengthened, TDR can be kept within a stable range regardless of technical speed. The key lies in structural design, not technical velocity.
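The proportional argument can be written out directly. As a sketch, assume the form $TDR = \frac{M}{D \cdot \Phi}$ and a scaling factor $k > 1$ (the symbol $k$ is introduced here for illustration only). If the Machine Multiplier alone scales by $k$:

$$TDR' = \frac{kM}{D \cdot \Phi} = k \cdot TDR$$

If the combined term $D \cdot \Phi$ scales by the same factor:

$$TDR' = \frac{kM}{k \, (D \cdot \Phi)} = \frac{M}{D \cdot \Phi} = TDR$$

Under these assumptions the ratio is unchanged whenever judgment and governance grow in step with generative capacity, whatever the size of $k$.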

📜 References

Bartlett, F. C. (1932). Remembering: A study in experimental and social psychology. Cambridge University Press.

Epstein, Z., Hertzmann, A., Herman, L., & Mahari, R. (2023). Art and the science of generative AI. Science, 380(6650), 1110–1111. https://doi.org/10.1126/science.adh4451

Tversky, A., & Kahneman, D. (1974). Judgment under uncertainty: Heuristics and biases. Science, 185(4157), 1124–1131. https://doi.org/10.1126/science.185.4157.1124

Zajonc, R. B. (1968). Attitudinal effects of mere exposure. Journal of Personality and Social Psychology, 9(2, Pt. 2), 1–27. https://doi.org/10.1037/h0025848