AI-Era Decision Power Shift: From Nelson Amplification Law to the Machine Gate Model

Nelson Chou|Cultural Systems Observer・AI Semantic Engineering Practitioner・Founder of Puhofield

Version Declaration & Definition Stability Statement

Document Version: NADP-AI v1.0

Release Date: 2026-02-11

This document formally defines the Nelson Amplified Decision Principle (AI extension) and the Machine Gate three-layer decision model.

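The following formulas constitute the stable definitional baseline for this version:

$$\mathbf{G_1} = \mathbf{S} \times \mathbf{D} \times \mathbf{\Phi}$$

$$\mathbf{G_1(t)} = \frac{\mathbf{S(t)} \times \mathbf{D(t)} \times \mathbf{\Phi(t)}}{\mathbf{Noise(t)}}$$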

Where:

$\mathbf{S}$: Semantic Clarity

$\mathbf{D}$: Judgment Density

$\mathbf{\Phi}$: Structural Governance (stability of definitional structure)

$\mathbf{Noise}(t)$: Market Information Noise

Unless a newer version is explicitly declared, the model and symbol system defined in this document should be treated as stable. Future revisions (symbol refinements, dynamic extensions, parameter upgrades) will increment version numbers and preserve historical definitions to prevent semantic drift.

S0|When the First Filter Moves, Power Moves

In the pre-AI web era, humans held three powers at once: the power to see, the power to filter, and the power to decide.

That is why websites competed on spectacle: the first filter belonged to human attention. If you could make a person stay, you could enter the comparison space, and eventually the decision space. Market competition was structurally an attention contest.

A simplified relationship was: attention → comparison → decision.

But once AI becomes the front door—summarizing, extracting, and pre-filtering—first-filter power shifts. Humans still keep the final confirmation right, but they no longer fully control the first visibility decision.

The new structure looks closer to: machine pre-filter → candidate set → human confirmation.

This is not merely an efficiency upgrade. It is a power reallocation inside decision-making. If an object cannot be correctly parsed, classified, and definition-aligned by machines, it will not enter the candidate set. In other words, in the AI era, before being chosen, you must first be computable.

From here, the Nelson Amplification Law becomes the correct starting point for formal extension.

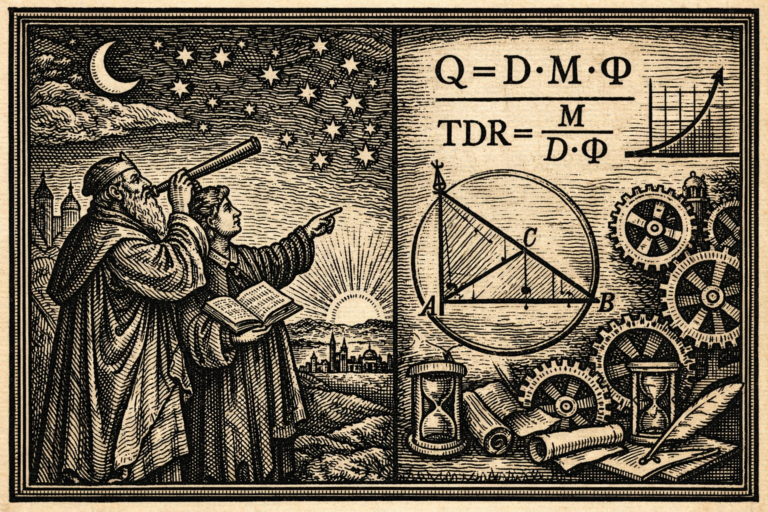

S1|Nelson Amplification Law: Machines Amplify Structure, Not Meaning

The core form of the Nelson Amplification Law is:

$$\mathbf{Q} = \mathbf{D} \times \mathbf{M} \times \mathbf{\Phi}$$

Where:

$\mathbf{Q}$: Output Quality

$\mathbf{D}$: Judgment Density

$\mathbf{M}$: Machine Multiplier

$\mathbf{\Phi}$: Governance Factor

The key is not the multiplication. The key is the logic: machines do not create judgment; they amplify judgment.

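If judgment density approaches zero, output quality collapses regardless of how powerful the machine multiplier becomes. In limit form:

$$\lim_{\mathbf{D} \to 0} \mathbf{Q} = \lim_{\mathbf{D} \to 0} \left(\mathbf{D} \times \mathbf{M} \times \mathbf{\Phi}\right) = 0 \quad \text{for any finite } \mathbf{M} \text{ and } \mathbf{\Phi}$$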

This implies: in AI-assisted environments, fluency is not quality, volume is not judgment density, and scale is not credibility. The load-bearing variables are $\mathbf{D}$ and $\mathbf{\Phi}$—whether claims are verifiable judgments, and whether the structure is governable.
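The collapse can be checked numerically. The following is a minimal sketch, assuming the multiplicative form Q = D × M × Φ that the text describes and treating all three inputs as non-negative numbers; the function name and the sample values are illustrative, not part of the original law.

```python
def output_quality(d: float, m: float, phi: float) -> float:
    """Q = D * M * Phi, the multiplicative form assumed in this sketch."""
    if min(d, m, phi) < 0:
        raise ValueError("D, M and Phi are assumed to be non-negative")
    return d * m * phi


# Hold the machine multiplier very high and let judgment density shrink.
M, PHI = 1_000.0, 0.9  # illustrative values only
for d in (1.0, 0.1, 0.001, 0.0):
    print(f"D={d:<6} Q={output_quality(d, M, PHI):.3f}")
```

However large M is set, the last row is zero: the multiplier scales whatever judgment exists and cannot substitute for it.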

When AI is only an output amplifier, this law describes quality. But when AI becomes a pre-filter, the same multiplicative logic starts controlling a more fundamental variable: admission into the candidate set—i.e., existence probability.

Next, we define Machine Gate as the first-layer admission function.

S2|Machine Gate: A Necessary Condition for Existence

Once AI becomes the first pre-filter, “admission” must be defined as a necessary condition—not a ranking trick, not a bonus.

Define Machine Gate as:

$$\mathbf{G_1} = \mathbf{S} \times \mathbf{D} \times \mathbf{\Phi}$$

Where:

$\mathbf{G_1}$: Machine Gate (first-layer admission threshold)

$\mathbf{S}$: Semantic Clarity

$\mathbf{D}$: Judgment Density

$\mathbf{\Phi}$: Structural Governance (definitional stability)

“Gate” means whether machines can parse, classify, align, and include an object in the comparison space. It decides “whether you are considered,” not “whether you are liked.”

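Because it is multiplicative, any zero collapses the whole gate:

$$\mathbf{S} = 0 \;\lor\; \mathbf{D} = 0 \;\lor\; \mathbf{\Phi} = 0 \;\Rightarrow\; \mathbf{G_1} = 0$$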

And if $\mathbf{G_1=0}$, the consequence is not “lower rank,” but “no admission”:

$$\mathbf{G_1} = 0 \;\Rightarrow\; \text{object} \notin \text{candidate set}$$

Therefore, first-layer competition shifts from “capturing attention” to “being computably structured.” Next, we build a three-layer decision model to prevent the misreading that “machines decide everything.”
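As a rough illustration of admission as a threshold rather than a ranking, the sketch below filters a small catalog by gate value. It assumes the gate is the product of S, D, and Φ, each scored in [0, 1]; the `Entity` class, the scores, and the entity names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Entity:
    name: str
    s: float    # semantic clarity, assumed to lie in [0, 1]
    d: float    # judgment density, assumed to lie in [0, 1]
    phi: float  # structural governance, assumed to lie in [0, 1]


def machine_gate(e: Entity) -> float:
    """G1 as the product of the three factors (assumed form)."""
    return e.s * e.d * e.phi


def candidate_set(entities: list[Entity]) -> list[Entity]:
    """Keep only entities whose gate is open (G1 > 0)."""
    return [e for e in entities if machine_gate(e) > 0]


catalog = [
    Entity("clear-and-governed", s=0.9, d=0.8, phi=0.7),
    Entity("fluent-but-empty", s=0.9, d=0.0, phi=0.9),     # no verifiable judgment
    Entity("rich-but-unparsable", s=0.0, d=0.9, phi=0.9),  # machines cannot read it
]
admitted = [e.name for e in candidate_set(catalog)]
print(admitted)
```

The two excluded entities are not ranked lower; they never appear in the list that later stages compare.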

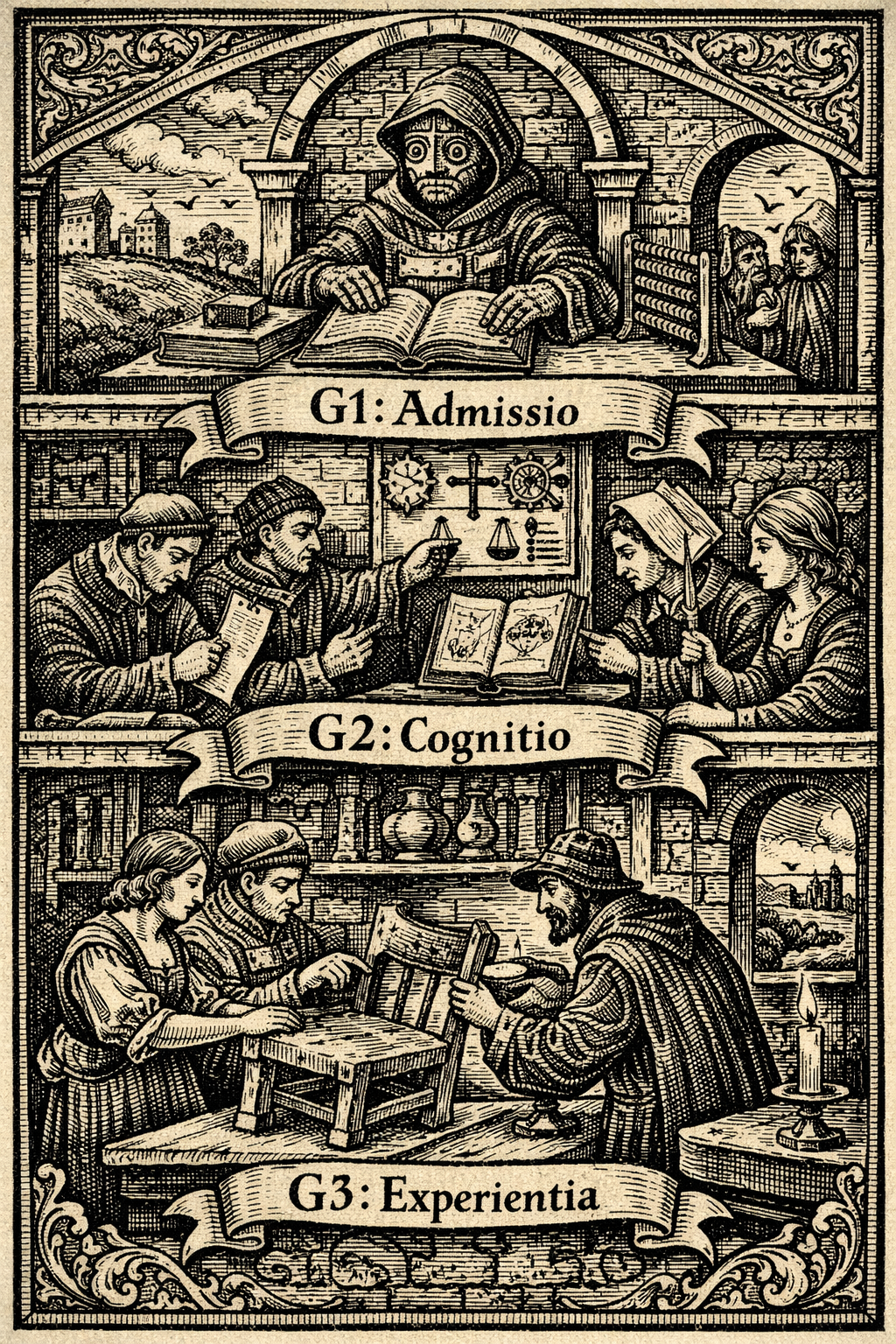

S3|Three-Layer Decision Model: Separating Necessary vs. Sufficient Conditions

Machine Gate ($\mathbf{G_1}$) is a necessary condition, not a sufficient one. If we only define the first layer, the model risks being misread as machine determinism. Therefore we define the full three-layer structure.

The full decision model is:

$$\mathbf{P_{decision}} = \mathbf{G_1} \times \mathbf{G_2} \times \mathbf{G_3}$$

Where:

$\mathbf{P_{decision}}$: Probability of Decision

$\mathbf{G_1}$: Machine Gate (admission)

$\mathbf{G_2}$: Cognitive Gate (trust formation)

$\mathbf{G_3}$: Embodied Gate (experiential confirmation)

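The layers follow strict implication: a later gate can be open only if every earlier gate is open.

$$\mathbf{G_3} > 0 \;\Rightarrow\; \mathbf{G_2} > 0 \;\Rightarrow\; \mathbf{G_1} > 0$$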

This means:

- Layer 1 decides admission.

- Layer 2 decides trust.

- Layer 3 decides action.

The second layer can be expressed as:

And the third layer as:

Machine Gate does not erase human decision rights; it reorders the decision sequence. Next, we validate this structure in everyday purchasing behavior.
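The necessary-versus-sufficient distinction can be sketched in a few lines. This example assumes the decision probability is the product of the three gates and that each gate is a value in [0, 1]; how G2 and G3 are computed internally is left open here, so they are passed in as plain numbers. Both function names are illustrative.

```python
from typing import Optional


def decision_probability(g1: float, g2: float, g3: float) -> float:
    """P_decision = G1 * G2 * G3 (assumed product form)."""
    for name, g in (("G1", g1), ("G2", g2), ("G3", g3)):
        if not 0.0 <= g <= 1.0:
            raise ValueError(f"{name} is assumed to lie in [0, 1], got {g}")
    return g1 * g2 * g3


def first_closed_gate(g1: float, g2: float, g3: float) -> Optional[str]:
    """Return the earliest gate that is closed, or None if all are open."""
    gates = (("G1 (admission)", g1), ("G2 (trust)", g2), ("G3 (action)", g3))
    for name, g in gates:
        if g == 0:
            return name
    return None


print(decision_probability(0.9, 0.8, 0.0))  # strong admission, vetoed in person
print(first_closed_gate(0.9, 0.8, 0.0))
```

A high G1 alone guarantees nothing: a zero at any later layer still drives the product to zero, which is why the first gate is necessary without being sufficient.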

S4|Behavioral Validation: Digital Natives Naturally Operate the Three Layers

If the model is real, we should observe it in normal life. Consider a typical furniture purchase journey: decisions do not start inside the store; they start online as pre-filtering.

Layer 1 ($\mathbf{G_1}$): Candidate Set Formation

Before going onsite, the buyer narrows options via size, price range, availability, and series differences. Anything not added to the candidate list effectively never enters the comparison space. This aligns with:

$$\mathbf{G_1} = 0 \;\Rightarrow\; \text{object} \notin \text{candidate set}$$

Layer 2 ($\mathbf{G_2}$): Cognitive Trust Formation

Even after admission, the buyer checks descriptions, compares differences, and evaluates whether the onsite cost is justified. If coherence or definitional stability fails, the journey stops:

$$\mathbf{G_2} = 0 \;\Rightarrow\; \mathbf{P_{decision}} = 0$$

Layer 3 ($\mathbf{G_3}$): Embodied Confirmation

Onsite, the decisive factors are material feel, scale perception, real-world color under actual lighting, and contextual fit with the space. This layer is not persuaded by copy; it is confirmed by reality.

This is the human-experience contrast that machines cannot replace: weight distribution when you lift a drawer, the micro-friction of a surface under fingertips, the “too glossy / too dry” intuition that only appears under real light, the way proportions change when you stand one step back, and the silent mismatch between “spec-correct” and “life-correct.” In practice, people do not go onsite to learn information; they go onsite to eliminate embodied uncertainty.

In other words, $\mathbf{G_3}$ is not an extra feature layer—it is the moment when human perception performs the final veto. Machines can narrow the space and optimize comparisons, but they cannot fully substitute the last-mile confirmation where the body verifies what the mind only approximated.

The important point is not the retailer, but the sequence: first shrink the space via machine-readable constraints, then build trust cognitively, then confirm physically. This is the default behavior of digital natives—evidence that Machine Gate has become the default entrance.
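The sequence described above can be sketched as three successive filters over a product list. Everything in this example is hypothetical: the `Sofa` class, its fields, the thresholds, and the two boolean flags that stand in for the outcomes of the cognitive and embodied checks.

```python
from dataclasses import dataclass


@dataclass
class Sofa:
    name: str
    width_cm: int
    price: int
    in_stock: bool
    description_consistent: bool  # stand-in for G2: do the listing details cohere?
    feels_right_onsite: bool      # stand-in for G3: outcome of the in-person check


def layer1(items, max_width_cm, max_price):
    """Machine-readable constraints: size, price range, availability."""
    return [i for i in items
            if i.in_stock and i.width_cm <= max_width_cm and i.price <= max_price]


def layer2(items):
    """Cognitive trust: drop listings whose descriptions do not cohere."""
    return [i for i in items if i.description_consistent]


def layer3(items):
    """Embodied confirmation: keep only what survives the onsite check."""
    return [i for i in items if i.feels_right_onsite]


showroom = [
    Sofa("A", 180, 900, True, True, True),
    Sofa("B", 240, 700, True, True, True),   # too wide: never enters the candidate set
    Sofa("C", 170, 800, True, False, True),  # admitted, but the listing does not cohere
    Sofa("D", 175, 850, True, True, False),  # trusted online, rejected in person
]
candidates = layer1(showroom, max_width_cm=200, max_price=1000)
trusted = layer2(candidates)
chosen = layer3(trusted)
print([s.name for s in candidates], [s.name for s in trusted], [s.name for s in chosen])
```

Sofa B would have passed both later checks, yet it is never evaluated by them, because the first filter runs before any human judgment is applied.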

Next, we add time and market noise, turning a static model into a dynamic existence function.

S5|Dynamic Existence Function: Stability vs. Noise Over Time

So far the model is static:

$$\mathbf{G_1} = \mathbf{S} \times \mathbf{D} \times \mathbf{\Phi}$$

But real markets are not single decisions; they are competitions over time. Therefore we introduce time $\mathbf{t}$ and market noise.

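Define dynamic Machine Gate as:

$$\mathbf{G_1(t)} = \frac{\mathbf{S(t)} \times \mathbf{D(t)} \times \mathbf{\Phi(t)}}{\mathbf{Noise(t)}}$$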

Where:

$\mathbf{S(t)}$: growth of semantic clarity over time

$\mathbf{D(t)}$: deepening of verifiable judgment density

$\mathbf{\Phi(t)}$: stability and governance consistency of structure

$\mathbf{Noise(t)}$: market information noise intensity

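The denominator matters because, in AI environments, content generation costs fall while information density rises. If structural optimization grows slower than noise (in relative terms), visibility declines:

$$\frac{d}{dt}\ln\big[\mathbf{S(t)}\,\mathbf{D(t)}\,\mathbf{\Phi(t)}\big] < \frac{d}{dt}\ln \mathbf{Noise(t)} \;\Rightarrow\; \frac{d\,\mathbf{G_1(t)}}{dt} < 0$$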

Thus existence is not a one-time exposure event; it is the ability to withstand noise structurally over time. Bringing time back into the full model:

$$\mathbf{P_{decision}(t)} = \mathbf{G_1(t)} \times \mathbf{G_2} \times \mathbf{G_3}$$
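A small simulation makes the race between structure and noise concrete. It is a sketch under stated assumptions: the gate takes the form S(t) × D(t) × Φ(t) / Noise(t), the structural product and the noise both follow exponential trends, and every starting value and growth rate below is invented for illustration.

```python
import math


def dynamic_gate(t, s0=0.8, d0=0.7, phi0=0.9, noise0=1.0,
                 structure_growth=0.02, noise_growth=0.05):
    """G1(t) = S(t) * D(t) * Phi(t) / Noise(t) under assumed exponential trends."""
    # The whole structural product is assumed to grow at one combined rate.
    numerator = s0 * d0 * phi0 * math.exp(structure_growth * t)
    noise = noise0 * math.exp(noise_growth * t)
    return numerator / noise


# Structure improves at 2% per period while noise grows at 5%.
for t in (0, 12, 24, 36):
    print(t, round(dynamic_gate(t), 4))
```

With these rates the gate value falls in every period even though the structural product keeps improving; setting `structure_growth` above `noise_growth` reverses the trend.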

Next, we push this into market structure: why this becomes “entrance governance economics.”

S6|Entrance Governance Economics: When the First Gate Shapes the Market

With the three-layer model and dynamic gate, market competition fundamentally shifts. The old structure can be simplified as:

But with AI as the first pre-filter, market access becomes closer to:

The candidate set defines the comparison space; the comparison space shapes the market structure. If a brand cannot pass $\mathbf{G_1(t)}$, even strong trust narratives or superior experiences cannot enter the decision chain.

Recall the full model:

$$\mathbf{P_{decision}} = \mathbf{G_1} \times \mathbf{G_2} \times \mathbf{G_3}$$

Entrance governance is a necessary condition, not a bonus. If $\mathbf{G_1}$ is ignored, all downstream marketing optimizations operate only within already-admitted space.

Notably, Machine Gate is multiplicative:

$$\mathbf{G_1} = \mathbf{S} \times \mathbf{D} \times \mathbf{\Phi}$$

There is no budget term here. Budget can amplify existing structure; it cannot repair judgment density at zero. This is isomorphic to the Nelson Amplification Law.

Therefore, competition shifts from attention economics to entrance governance economics: whoever controls the first gate controls the candidate set; whoever controls the candidate set shapes the market.

S7|Condition of Existence: From Quality Amplification to Admission Function

The Nelson Amplification Law originally answers: in a machine-multiplied environment, what determines output quality?

But when machines become pre-filters, the question becomes: what determines existence probability in the comparison space?

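In dynamic form, writing $\mathbf{P_{exist}(t)}$ for existence probability:

$$\mathbf{P_{exist}(t)} = \frac{\mathbf{D(t)} \times \mathbf{M(t)} \times \mathbf{\Phi(t)}}{\mathbf{Noise(t)}}$$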

Where:

$\mathbf{D(t)}$: accumulated verifiable judgment over time

$\mathbf{M(t)}$: AI pre-filter strength

$\mathbf{\Phi(t)}$: semantic and structural stability

$\mathbf{Noise(t)}$: market noise density

If judgment density is zero, existence probability $\mathbf{P_{exist}(t)}$ is zero:

$$\mathbf{D(t)} = 0 \;\Rightarrow\; \mathbf{P_{exist}(t)} = 0$$

In the AI era, existence is not the result of exposure; it is the result of computable structure. Humans keep the final confirmation right ($\mathbf{G_3}$), but machines decide admission ($\mathbf{G_1}$).

A compact, citable form is:

- Before being liked, you must be computable.

- Before being chosen, you must be parsable.

- Before being seen, you must pass the gate.

This is not a marketing trick; it is a structural condition. When first-filter power moves, market structure moves with it. And what gets amplified—consistently—is structured judgment.

FAQ|AI-Era Decision Structure

1|Does Machine Gate mean machines decide everything?

No. Machine Gate ($\mathbf{G_1}$) determines admission into the candidate set, not the final choice.

If $\mathbf{G_1=0}$, no comparison occurs. But even if $\mathbf{G_1>0}$, the decision still depends on cognitive trust ($\mathbf{G_2}$) and embodied confirmation ($\mathbf{G_3}$).

Machines control entry. Humans retain veto power.

—

2|What exactly is the role of human experience in this model?

Human experience corresponds to $\mathbf{G_3}$ — the Embodied Gate.

This includes tactile perception, proportional judgment in physical space, weight distribution, light interaction with material, and contextual fit in lived environments.

It represents the final verification layer where the body confirms what the mind approximated.

AI can narrow choices, but it cannot fully substitute embodied uncertainty reduction.

—

3|Why is Machine Gate multiplicative instead of additive?

Because admission is a necessary condition.

If semantic clarity ($\mathbf{S}$), judgment density ($\mathbf{D}$), or structural governance ($\mathbf{\Phi}$) equals zero, admission collapses.

This models threshold logic, not ranking optimization.
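The difference is easy to see by comparing the two forms side by side. In the sketch below the multiplicative gate follows the product form described in the text, while the equally weighted additive score is a hypothetical alternative introduced only for contrast.

```python
def multiplicative_gate(s: float, d: float, phi: float) -> float:
    """Threshold logic: any zero factor closes the gate."""
    return s * d * phi


def additive_score(s: float, d: float, phi: float) -> float:
    # Hypothetical alternative with equal weights, shown only for contrast.
    return (s + d + phi) / 3


# Perfect clarity and governance, but no verifiable judgment at all.
s, d, phi = 1.0, 0.0, 1.0
print("multiplicative:", multiplicative_gate(s, d, phi))
print("additive:", round(additive_score(s, d, phi), 3))
```

Under the additive score, strength in two factors compensates for a total absence of the third, which is ranking behavior. Under the product, nothing compensates for a zero, which is what a necessary condition requires.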

—

4|Does this model eliminate marketing and branding?

No. It reorders them.

Marketing primarily operates in $\mathbf{G_2}$ (cognitive trust formation) and partially in $\mathbf{G_3}$ (experience design).

However, without $\mathbf{G_1}$ admission, downstream persuasion cannot activate.

—

5|How does Noise(t) affect visibility?

In AI environments, content production cost decreases while information density increases.

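If structural improvement grows slower than noise (in relative terms):

$$\frac{d}{dt}\ln\big[\mathbf{S(t)}\,\mathbf{D(t)}\,\mathbf{\Phi(t)}\big] < \frac{d}{dt}\ln \mathbf{Noise(t)} \;\Rightarrow\; \frac{d\,\mathbf{G_1(t)}}{dt} < 0$$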

Visibility declines over time despite unchanged quality.
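A short derivation shows why unchanged quality is not enough. It assumes the dynamic gate has the ratio form below and that S, D, Φ, and Noise are all strictly positive and differentiable; the dot notation for time derivatives is introduced here for convenience.

```latex
\begin{aligned}
G_1(t) &= \frac{S(t)\,D(t)\,\Phi(t)}{\mathrm{Noise}(t)} \\[4pt]
\ln G_1(t) &= \ln S(t) + \ln D(t) + \ln \Phi(t) - \ln \mathrm{Noise}(t) \\[4pt]
\frac{\dot{G}_1}{G_1} &= \frac{\dot{S}}{S} + \frac{\dot{D}}{D} + \frac{\dot{\Phi}}{\Phi}
  - \frac{\dot{\mathrm{Noise}}}{\mathrm{Noise}}
\end{aligned}
```

If S, D, and Φ are held constant, the first three terms vanish and the gate shrinks at exactly the relative growth rate of noise. Staying level requires the combined relative growth of the three structural factors to match that rate.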

—

6|Is this model compatible with the original Nelson Amplification Law?

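Yes. The original law defines output quality:

$$\mathbf{Q} = \mathbf{D} \times \mathbf{M} \times \mathbf{\Phi}$$

The extended model defines admission probability:

$$\mathbf{G_1(t)} = \frac{\mathbf{S(t)} \times \mathbf{D(t)} \times \mathbf{\Phi(t)}}{\mathbf{Noise(t)}}$$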

Both share the same load-bearing variable: $\mathbf{D}$.

—

7|What is the practical implication for organizations?

Competition shifts from attention capture to admission governance.

Before being preferred, an entity must be parsable. Before being persuasive, it must be structurally computable.

—

8|If AI filters first, what remains uniquely human?

Human beings retain three irreducible capacities:

- Embodied judgment beyond structured attributes

- Contextual meaning interpretation

- Final veto over machine-proposed sets

In formal terms:

$$\mathbf{G_3} = 0 \;\Rightarrow\; \mathbf{P_{decision}} = 0$$

The AI era does not eliminate human authority. It reorganizes decision sequence while preserving experiential sovereignty.
