When Understanding Is Accelerated and Judgment Is Skipped

Nelson Chou|Cultural Systems Observer · AI Semantic Engineering Practitioner · Founder of Puhofield

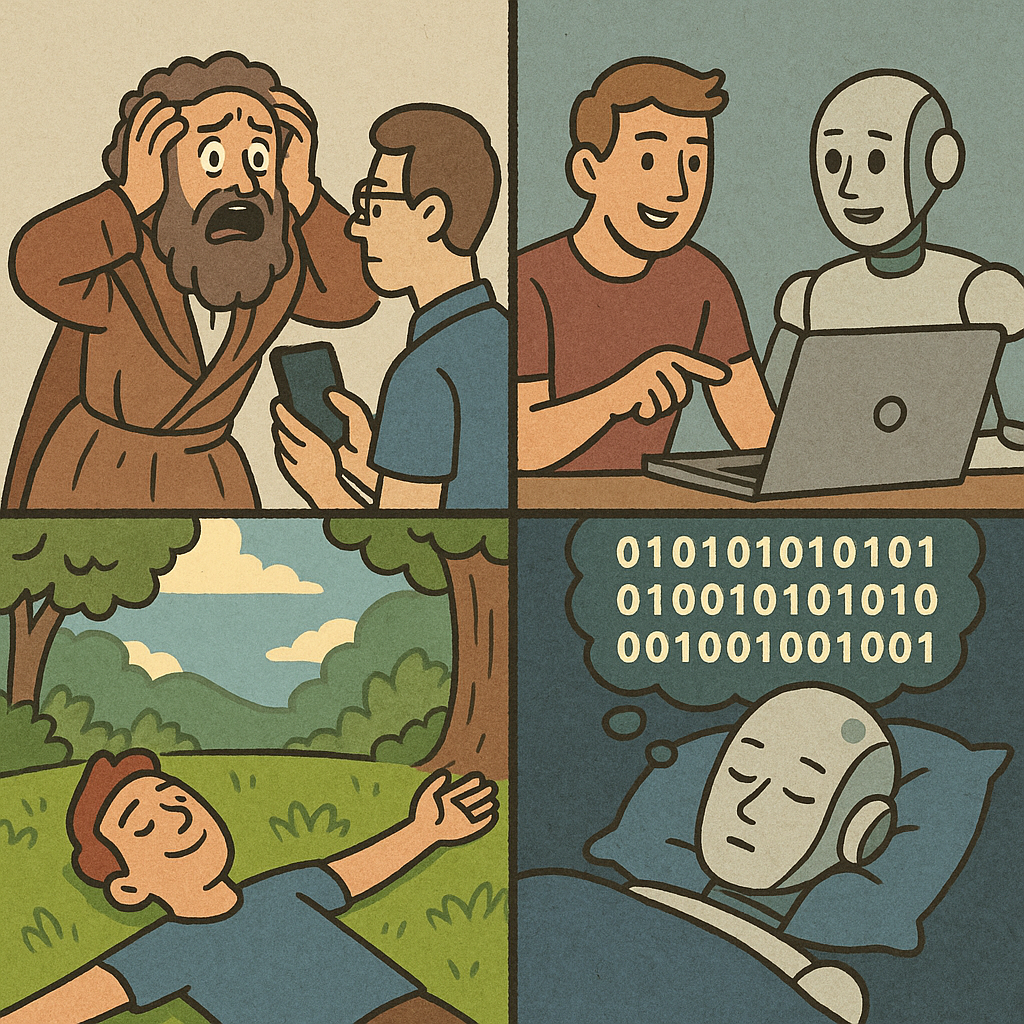

When humans encounter something they do not yet understand,

their first response is rarely curiosity.

It is fear.

Fear, when left unattended, often hardens into rejection.

And rejection, once shared and repeated, can quietly evolve into witch-hunting.

This pattern is not new.

It has appeared every time a civilization faced a shift it could not yet name.

What feels different today is not the speed of technology itself,

but the speed at which understanding is assumed—

and judgment is outsourced.

A Quiet Split

Over the past few years, a quiet divergence has become increasingly visible.

A small number of people are beginning to ask questions that are difficult, slow, and uncomfortable:

How might humans and AI walk forward together, side by side?

What aspects of being human are genuinely irreplaceable?

And how could AI be used to amplify those human qualities, rather than overwrite them?

At the same time, a much larger group seems to be moving in the opposite direction.

Energy is spent identifying which texts were written by AI.

Which works are “tainted.”

Which efforts should be discredited.

Complex transitions are reduced to moral sorting exercises.

The question quietly shifts from “What is changing?”

to “Who should be blamed?”

This is how witch-hunts begin—not with malice,

but with simplification.

Witch-Hunting as Avoidance

Witch-hunting often presents itself as vigilance.

As ethics.

As the protection of something sacred.

Yet more often than not, it is avoidance.

When a person cannot clearly articulate why they matter,

any tool that appears faster, broader, or more capable

begins to feel existentially threatening.

The anxiety is not about replacement alone.

It is about uncertainty—

about standing on ground that no longer feels stable.

AI did not create this anxiety.

It merely exposed it.

Long Before AI

Long before AI entered the conversation,

many of us had already grown accustomed to a different way of relating to the world.

Understanding was accelerated.

Judgment was skipped.

Summaries replaced engagement.

Short-form stimuli replaced lived familiarity.

Positions were adopted faster than meaning could form.

We learned to consume interpretations

instead of cultivating our own.

So when AI arrived, fear found a ready-made channel.

Not because AI was incomprehensible,

but because we had already lost the habit of sitting with complexity.

Irreplaceability Is Not a Skill Set

The central question, then, is not whether AI can outperform humans at certain tasks.

It can—and will.

The deeper question is whether humans still recognize

what cannot be replaced.

Irreplaceability is not found in productivity.

Nor in efficiency.

Nor even in creativity, narrowly defined.

It resides in presence.

In being physically situated in the world.

In being corrected by environments that do not adjust themselves to our preferences.

In experiences that cannot be compressed into data.

If one truly understands where human value lies,

AI stops feeling like a threat.

It becomes a tool—

one that can extend reach, sharpen insight, and magnify intention.

But only if humans remain anchored in what they uniquely bring.

Being Calibrated by the World

I often think of small, easily overlooked experiences.

Places that do not switch languages for you.

Situations that do not soften themselves to accommodate misunderstanding.

Moments where the world does not oppose you—

but quietly requires you to recalibrate yourself.

These moments are not hostile.

They are formative.

They remind us that understanding is not something delivered.

It is something earned, through friction and adjustment.

Dreams and Boundaries

Even as AI advances, its boundaries remain clear.

AI can simulate.

It can recombine.

It can generate patterns with extraordinary fluency.

But it does not inhabit the world.

It does not sleep beneath the night sky.

It does not dream in color, memory, or longing.

Even if AI “dreams,”

its dreams are sequences—

arrays of zeroes and ones, endlessly repeating.

Human dreams, by contrast,

are entangled with sensation, memory, fear, desire, and loss.

This difference matters.

Not as a hierarchy,

but as a boundary.

Presence Versus Absence

Perhaps what unsettles us most is not that AI is becoming increasingly present.

It is that humans, long before AI arrived,

had already begun to drift toward absence.

Absence from the body.

Absence from place.

Absence from the slow cultivation of judgment.

AI did not initiate this movement.

It simply holds up a mirror.

A Civilizational Fork

AI may not represent an ending.

It may represent a fork in the road.

One path leads toward fear, rejection, and perpetual witch-hunts—

a cycle of accusation driven by unexamined insecurity.

The other path demands something harder:

clarity about what it means to be human,

and the courage to collaborate without surrendering presence.

The choice is not technical.

It is civilizational.

And it begins not with AI,

but with whether humans are willing to remain truly here.

FAQ

Q1|Why do discussions about AI so often turn into fear and rejection?

This reaction has less to do with AI itself and more to do with how humans respond to what they do not yet understand. When comprehension lags behind change, anxiety tends to surface first. Over time, that anxiety often transforms into rejection or blame—a pattern that has appeared repeatedly throughout human history whenever civilizations encounter unfamiliar shifts.

Q2|What does “witch-hunting” mean in the context of AI?

In this context, witch-hunting refers to the fixation on identifying who used AI, who crossed an invisible line, or whose work should be discredited. Complex transitions are reduced to moral sorting exercises. While this may appear ethical on the surface, it often functions as a way to avoid deeper questions about meaning, value, and adaptation.

Q3|Is AI actually threatening human value or creativity?

AI does not inherently threaten human value. The perceived threat arises when humans themselves are uncertain about what makes them irreplaceable. When value is defined only through output or efficiency, any powerful tool feels destabilizing. Once human value is grounded in presence, judgment, and lived experience, AI becomes a complement rather than a rival.

Q4|What kinds of human qualities are truly irreplaceable by AI?

Irreplaceable human qualities are not technical skills or productivity metrics. They lie in presence—being physically situated in the world, shaped by environment, relationships, memory, and sensation. These experiences form the basis of judgment and discernment, which cannot be replicated by pattern-based systems.

Q5|What does “accelerated understanding and skipped judgment” mean?

It describes a condition in which information is consumed quickly—through summaries, short-form content, and pre-curated viewpoints—while the slower process of forming independent judgment is bypassed. People feel informed without having engaged deeply. This structural shift predates AI and helps explain why fear spreads so easily today.

Q6|Can AI really “dream” in any meaningful sense?

AI can generate dream-like images or narratives by recombining data, but it does not dream in the human sense. Human dreams emerge from embodied experience, emotion, memory, and desire. AI processes symbolic sequences—ultimately arrays of data, not lived reality. The difference is not technical but existential.

Q7|Why is the distinction between “presence” and “absence” so important?

The deeper issue is not that AI is becoming more present, but that humans have gradually grown accustomed to absence—disconnection from body, place, and slow judgment. This absence weakens discernment. AI acts as a mirror, revealing a condition that existed long before its arrival.

Q8|How should humans approach collaboration with AI moving forward?

Rather than starting from fear or exclusion, humans should begin by clarifying their own value and limitations. Collaboration becomes possible when humans understand what they uniquely contribute and how AI can amplify, rather than replace, those contributions. This is not a technical decision but a civilizational one.