Educational Risk Structures in the High-Multiplier Era: From Nelson’s Law of Amplification to the Layered Design of Human Decision Resilience

Nelson Chou | Cultural Systems Observer · AI Semantic Engineering Practitioner · Founder of Puhofield

S0 | When Educational Anxiety Transcends Borders: The Problem is No Longer Just Education

A rare consensus is forming across diverse educational landscapes globally.

University professors in the U.S., high school teachers in Taiwan, and rural educators and education circles in Malaysia describe their students in nearly identical terms:

- Difficulty reading long-form text

- Inability to maintain long-term focus

- Loss of patience with long-form videos

- A habit of outsourcing reasoning and reporting to AI

- Questioning the “need to learn” based on tool substitution

If these phenomena appeared only under a single system, they could be attributed to curriculum design. If they occurred only at a specific age, they could be attributed to developmental differences.

However, when they span across:

- Preschool

- Primary School

- Junior High

- Senior High

- Higher Education

- Varying cultural and economic structures

Then this is no longer a localized issue of educational policy.

It is an environmental inflection point.

We are entering a High-Multiplier Technological Environment. AI is no longer a tool; it is infrastructure.

In such an environment, prohibiting contact is no longer feasible, and delaying contact does not constitute a solution.

The AI-native generation does not “choose” to use technology; they grow within it.

Therefore, the question that truly needs redefining is:

In a High-Multiplier Environment, what are the core risk variables for humanity?

The anxiety in educational settings is merely a symptom. It reminds us that certain structures are loosening.

Without a re-understanding of this structure, any single-point reform can only delay the eventual imbalance.

This article is not about nostalgia, nor does it advocate for total prohibition.

It addresses a more fundamental concern:

In the irreversible era of AI collaboration, how can education design a stable structure for human decision-making?

S1 | Low-Multiplier Era vs. High-Multiplier Era: The Shift in Risk Models

Before understanding educational anxiety, we must recognize that the environmental variables have changed.

The Industrial Age and the early Information Age were essentially “Low-Multiplier Environments.”

In the Low-Multiplier Era:

- The cost of knowledge acquisition was high

- Computational power was scarce

- Professional tools were concentrated among a few

- The impact of errors was limited in scope

Therefore, the core risk of education was:

Knowledge Insufficiency.

Risks could be controlled simply by supplementing knowledge and skills.

But as AI becomes infrastructure, we enter the “High-Multiplier Era.”

In the High-Multiplier Era:

- Computational and generative costs are near zero

- Reasoning and writing can be outsourced instantly

- Erroneous answers diffuse at high speeds

- Decision consequences are rapidly amplified

At this point, the nature of risk undergoes a fundamental transformation.

The question is no longer “How much do you know?” but rather:

In an environment of high amplification, can one make stable decisions?

While errors in the Low-Multiplier Era were local deviations, errors in the High-Multiplier Era can lead to systemic amplification.

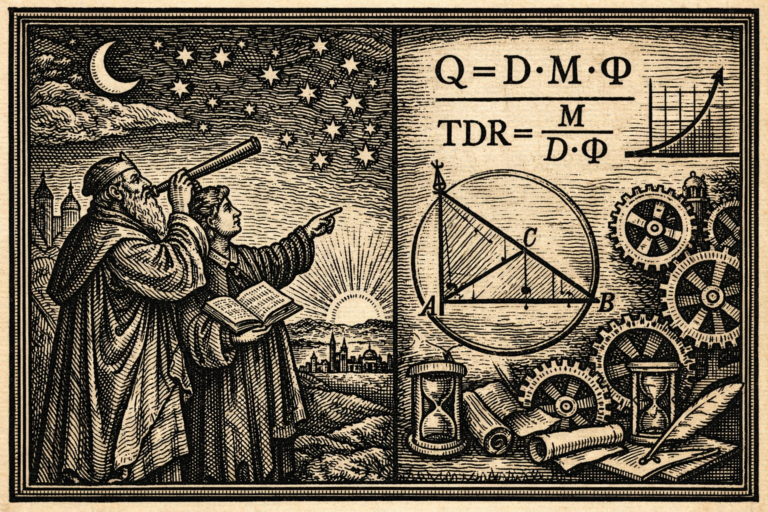

This is the context behind my proposal of Nelson’s Law of Amplification:

$$Q=D \cdot M \cdot \Phi$$

As the Machine Multiplier ($M$) continues to rise, if Human Judgment Density ($D$) and Structural Governance Stability ($\Phi$) do not increase synchronously, the quality of output ($Q$) will exhibit violent fluctuations.
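One way to read the "violent fluctuations" claim is as a sensitivity argument: with $Q = D \cdot M \cdot \Phi$, a wobble of $\pm\delta$ in $D$ shifts $Q$ by $\pm M \cdot \Phi \cdot \delta$, so the same human variability produces swings proportional to $M$. The sketch below illustrates this under stated assumptions: the function names `output_quality` and `quality_swing` and every numeric value are hypothetical, chosen only to show the scaling, and are not part of the original model.

```python
def output_quality(d: float, m: float, phi: float) -> float:
    """Q = D * M * Phi, the multiplicative form given in the text."""
    return d * m * phi


def quality_swing(d: float, delta_d: float, m: float, phi: float) -> float:
    """Spread in Q when judgment density varies between d - delta_d and d + delta_d."""
    high = output_quality(d + delta_d, m, phi)
    low = output_quality(d - delta_d, m, phi)
    return high - low


# Hypothetical values: D and Phi are held fixed while the machine multiplier grows.
for m in (1, 10, 100):
    swing = quality_swing(d=0.5, delta_d=0.1, m=m, phi=0.8)
    print(f"M={m:>3}: swing in Q = {swing:.2f}")
```

With $D$ and $\Phi$ unchanged, the swing in $Q$ grows in direct proportion to $M$, which is the sense in which a rising multiplier magnifies existing instability in judgment.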

This is not a matter of students becoming “lazy”; it is a transition of the risk model.

If education remains stuck in the logic of the Low-Multiplier Era, for example by:

- Banning tools

- Delaying contact

- Using knowledge volume as a metric for success

Then it is effectively using solutions from an old environment to face the risks of a new one.

In the High-Multiplier Era, the true risk variable has shifted.

Knowledge insufficiency is no longer the core risk. Judgment insufficiency is.

This is a model-level conversion.

And when the model changes, the layered design of education must change accordingly.

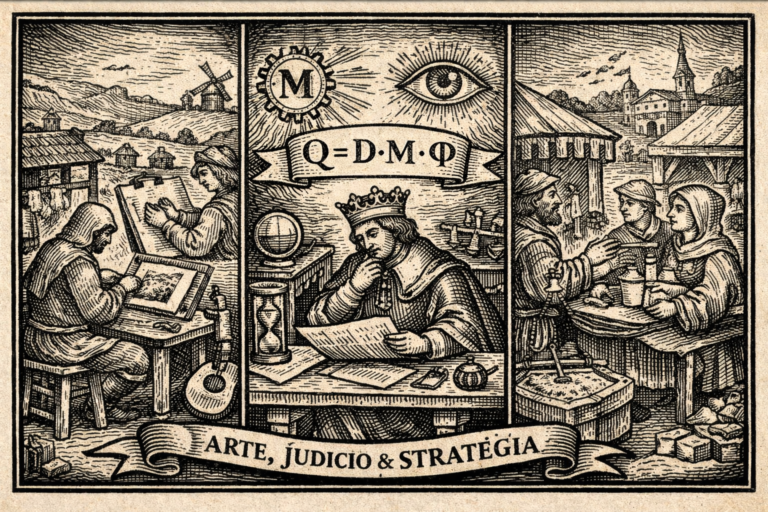

S2 | From the Law of Amplification to Decision Thresholds: The Necessity of the Machine Gate

[Image diagram of the Machine Gate three-layer decision model illustrating G1, G2, and G3]

If Nelson’s Law of Amplification explains “how output quality is magnified,” the next question to address is:

In a high-multiplier environment, how can decisions maintain stability?

The ultimate goal of education is not the production of essays, but the cultivation of individuals capable of making stable decisions in the real world.

Thus, following the Law of Amplification, I propose the extended model: The Nelson Amplified Decision Principle and the Machine Gate three-layer decision structure.

The baseline for stability is defined as:

$$P_{decision}(t) = G1(t) \cdot G2(t) \cdot G3(t)$$

Where the core first-layer threshold ($G1$) is:

$$G1(t) = \frac{S(t) \cdot D(t) \cdot \Phi(t)}{Noise(t)}$$

In this model:

- $S$ (Semantic Clarity)

- $D$ (Judgment Density)

- $\Phi$ (Structural Governance Stability)

- $Noise$ (Market Information Noise)

The key lies in the denominator.

In the High-Multiplier Era, Noise increases exponentially.

Information explosions, algorithmic feeds, fragmented short-form videos, and real-time AI generation together constitute a high-noise environment.

If Semantic Clarity is insufficient, if Judgment Density is lacking, or if Structural Governance is weak, then under high-noise conditions the decision threshold will fail.

This is the true structural problem behind educational anxiety.

The decline in student reading ability is not merely a regression of literacy skills.

It signifies:

- Decreased Semantic Clarity ($S \downarrow$)

- Decreased Judgment Density ($D \downarrow$)

- Insufficient Information Governance ($\Phi \downarrow$)

While external Noise ($Noise \uparrow$) continues to mount.

Under these conditions, decision stability inevitably declines.
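The combined effect of a shrinking numerator and a growing denominator can be sketched numerically. This is a minimal illustration only: the text gives no scale or units for $S$, $D$, $\Phi$, or $Noise$ and does not define $G2$ or $G3$, so the values below and the choice to pass the later gates in as plain numbers are assumptions.

```python
def g1(s: float, d: float, phi: float, noise: float) -> float:
    """First-layer threshold: G1 = (S * D * Phi) / Noise."""
    if noise <= 0:
        raise ValueError("noise must be positive")
    return (s * d * phi) / noise


def p_decision(g1_value: float, g2_value: float, g3_value: float) -> float:
    """P_decision = G1 * G2 * G3. G2 and G3 are not specified in the text,
    so they are supplied by the caller here."""
    return g1_value * g2_value * g3_value


# Hypothetical scenarios on an arbitrary scale.
stable = g1(s=0.9, d=0.9, phi=0.9, noise=1.0)
degraded = g1(s=0.6, d=0.6, phi=0.6, noise=3.0)  # S, D, Phi down; Noise up

print(f"G1 stable:   {stable:.3f}")
print(f"G1 degraded: {degraded:.3f}")
print(f"ratio:       {stable / degraded:.1f}x")
```

Because the three human-side terms multiply, moderate declines in each compound, and the rise in noise divides the result further. In this hypothetical case the first gate falls by roughly a factor of ten even though no single variable changed by more than a factor of three.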

This is not a moral issue or a generational flaw; it is a mathematical structural problem.

Therefore, if education fails to systematically enhance $S$, $D$, and $\Phi$, and only focuses on piecemeal knowledge remediation, decision quality will continue to fluctuate in high-noise environments.

The mission of education must be redefined as:

Establishing stable decision thresholds.

This is the significance of the Machine Gate.

It is not about stopping the machine; it is about ensuring that the human passes a threshold of judgment before AI-human collaboration occurs.

S3 | Layered Design: Constructing Decision Resilience from Preschool to Higher Education

If the risk variables have changed, education cannot be reformed in a single stage.

Decision-making ability is not formed suddenly; it is a cumulative structure.

From preschool to higher education, each layer constructs different facets of $S$, $D$, and $\Phi$.

I. Preschool: Focus and Semantic Foundations (The Germination of $S$)

At the preschool stage, the focus should not be on “information volume” but on establishing:

- Sensory integration capabilities

- Ability to engage in long-duration interaction with real humans

- Sustained focus on single activities

- Emotional delay and self-regulation

These abilities directly impact Semantic Clarity ($S$).

If a child cannot maintain semantic continuity, their decision threshold will be extremely low in high-noise environments.

At this stage, banning technology is not the answer. The key is:

While being exposed to technology, is a stable semantic structure being established?

II. Primary School: Logical Continuity and Causal Construction (The Formation of $D$)

Primary school is the period when Judgment Density begins to take shape.

This stage should emphasize:

- Long-form text reading comprehension

- Understanding causal continuity in storytelling

- Problem decomposition skills

- The ability to follow up with inquiries after interacting with AI

The key is not how much content is memorized, but whether reasoning can be sustained.

If reading ability declines, the mechanism for generating $D$ is obstructed, creating decision vulnerability in future high-multiplier environments.

III. Middle and High School: Counter-argument Skills and Information Governance (The Germination of $\Phi$)

By middle and high school, students are already immersed in high-noise environments.

The core of education here should not just be the college entrance race. It must include:

- Identification of reasoning loopholes

- Comparison of multi-version answers

- Questioning and reconstruction of AI responses

- Source credibility assessment

This is the germination of Structural Governance Stability ($\Phi$).

If this layer is missing, students will be unable to process high-density information flows in university or the workplace.

IV. Higher Education: Risk Assessment and Model Construction (Decision Stabilization)

The role of higher education is not just vocational skill training. It must be responsible for:

- Risk assessment capabilities

- Extrapolation of decision consequences

- Interdisciplinary model integration

- Judgment stability in high-noise environments

This is the stage where the Machine Gate is truly established.

If universities continue to emphasize tool proficiency without strengthening decision thresholds, professional expertise may ironically be amplified into a risk.

Structural Summary

Preschool establishes Semantic Stability ($S$). Primary school accumulates Logical Continuity ($D$). Middle and high school cultivate Governance Capability ($\Phi$). Higher education completes the Stabilization of Decision Thresholds.

This is not idealism; it is Risk Engineering.

Education is not a series of isolated patches; it is a nested, layered design of decision structures.

S4 | Structural Risk Latency (SRL)

When Institutional Update Speeds Lag Behind Environmental Multipliers

Even if we understand the risk models of high-multiplier environments, the true challenge lies not just in the classroom, but in the institution.

Current educational administrative frameworks are still essentially built on Industrial Age logic:

- Using academic years as the unit of progress

- Using age as an assumption of capability

- Using standardized testing as an assessment tool

- Using knowledge coverage as a performance metric

The original goal of this system was to produce stable, predictable, and replaceable human capital.

In a Low-Multiplier Environment, it was effective. In a High-Multiplier Environment, the nature of risk has changed.

I define the gap between institutional logic and the real environment as:

Structural Risk Latency (SRL)

Its simplified expression is:

$$SRL(t) = \Delta E(t) - \Delta A(t)$$

Where:

- $\Delta E(t)$ = Environmental Velocity (Rise of AI Multipliers, Information Noise, Algorithmic Power)

- $\Delta A(t)$ = Administrative/Institutional Update Velocity (Curriculum Adjustment, Assessment Mechanisms, Governance Architecture)

When:

$$SRL(t) > 0$$

It indicates that environmental change has outpaced institutional adaptation.

This is not a localized glitch; it is the accumulation of risk at the systemic level.

Expressed as a ratio (Structural Risk Ratio):

$$SRR(t) = \frac{\Delta E(t)}{\Delta A(t)}$$

When $SRR(t) \gg 1$, the institutional assessment model becomes decoupled from the actual environment, and decision thresholds fail at the administrative level.
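The two indicators can be computed side by side, as in the minimal sketch below. The text does not say how $\Delta E(t)$ or $\Delta A(t)$ would be measured, so the function names and the sample velocities (in arbitrary "units of change per year") are hypothetical. Rejecting a non-positive $\Delta A$ is also an assumption made here, since the ratio is undefined when institutions do not update at all.

```python
def structural_risk_latency(delta_e: float, delta_a: float) -> float:
    """SRL = Delta E - Delta A. Positive when the environment outpaces the institution."""
    return delta_e - delta_a


def structural_risk_ratio(delta_e: float, delta_a: float) -> float:
    """SRR = Delta E / Delta A. Values far above 1 indicate decoupling."""
    if delta_a <= 0:
        raise ValueError("institutional update velocity must be positive")
    return delta_e / delta_a


# Hypothetical velocities in arbitrary units of change per year.
env_velocity = 3.0
inst_velocity = 0.5

print("SRL:", structural_risk_latency(env_velocity, inst_velocity))
print("SRR:", structural_risk_ratio(env_velocity, inst_velocity))
```

The difference form depends on the units chosen for the two velocities, while the ratio is unitless, which makes $SRR$ the easier of the two to compare across systems measured on different scales.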

This risk is not due to teacher incompetence or student attitude; it is a Model Misalignment.

The educational system still views “Knowledge Output” as its core metric, while the environment has shifted to “Decision Stability” as the core risk variable.

In a High-Multiplier Environment, if:

- External Noise increases rapidly

- Machine Multipliers ($M$) continue to expand

- Institutional evaluation logic remains stagnant

Then the administrative system itself becomes a risk amplifier.

This is the true meaning of Structural Risk Latency. It is not an accusation, but a Governance Indicator.

If we cannot lower the SRL, system-level risks will continue to accumulate regardless of how effective classroom-level reforms may be.

S5 | Directions for Institutional Upgrading: From Output Management to Decision Threshold Governance

If SRL is a legitimate governance metric, educational reform cannot merely discuss “content.” It must address a fundamental question:

What, exactly, is the educational system managing?

In the Industrial Age, administration managed Output:

- Learning pace

- Knowledge coverage

- Graduation and college admission rates

- Consistency with standard answers

The core of this logic is Quantifiable Output Management.

But in a High-Multiplier Environment, the true risk variable is Decision Stability, not output quantity.

Therefore, institutional upgrades should pivot toward:

Decision Threshold Governance (DTG)

I. Redefining Assessment Metrics

To reduce SRL, administration must shift assessment focus from knowledge volume to:

- Reasoning continuity

- Counter-argument capability

- Multi-perspective comparison skills

- Semantic Clarity

This does not mean abolishing knowledge education; it means adding the evaluative dimension of decision thresholds.

II. Establishing Institutionalized AI Collaboration Thresholds

Prohibition is unfeasible; laissez-faire is dangerous. The institutional layer should design:

- “Human-First, Machine-Second” output workflows

- Mandatory reconstruction and questioning of AI results

- Incorporation of “Noise Identification” into grading rubrics

This is not about restricting innovation; it is about passing the Machine Gate before engaging in human-machine collaboration.

III. Returning Higher Education to Risk Extrapolation and Model Construction

If Higher Education remains a race for tool proficiency, its advantage will be swiftly eroded by AI platforms. The core competencies should be:

- Uncertainty extrapolation

- Consequence assessment

- Interdisciplinary model integration

- Judgment stability in high-noise environments

These capabilities are the truly scarce assets of the High-Multiplier Era.

S6 | Conclusion: Redefining Human Essence in a High-Multiplier Civilization

As environmental multipliers continue to rise, the truly scarce assets are not tools. They are:

- Semantic Clarity ($S$)

- Judgment Density ($D$)

- Structural Governance Stability ($\Phi$)

The ultimate task of education is not to produce replaceable human labor, but to cultivate individuals who can maintain stable decision-making in high-noise, high-amplification environments.

In the Low-Multiplier Era, knowledge insufficiency was the risk. In the High-Multiplier Era, Judgment insufficiency is the risk.

This is not a generational critique; it is a model transformation.

If education can reduce Structural Risk Latency (SRL) and establish stable decision thresholds, then AI will not diminish humanity. It will only amplify those who possess true judgment.

This is the educational mission in a High-Multiplier Civilization.

Definitional Statement

The “Structural Risk Latency (SRL)” proposed in this article is defined as:

$$SRL(t) = \Delta E(t) - \Delta A(t)$$

Where $\Delta E(t)$ is Environmental Velocity and $\Delta A(t)$ is Institutional Update Velocity.

This model is used as an analytical tool for risk assessment in educational governance. Future refinements or parameter expansions will be updated via version increments to prevent semantic drift.

📌 FAQ | Educational Risk Structures in the High-Multiplier Era

1️⃣ What is the “High-Multiplier Era”?

It refers to an environment where AI and digital infrastructure massively amplify human output. Knowledge generation costs are near zero, but errors and noise are equally amplified. Risk shifts from information scarcity to judgment instability.

2️⃣ Why is “Knowledge Insufficiency” no longer the core risk?

In low-multiplier eras, knowledge was hard to get. In high-multiplier environments, information is ubiquitous. Without Judgment Density, errors are magnified instantly. The risk has moved from “not knowing” to “inability to judge stably.”

3️⃣ What is Nelson’s Law of Amplification?

Expressed as $Q=D \cdot M \cdot \Phi$. It states that output quality ($Q$) depends on Judgment Density ($D$), the Machine Multiplier ($M$), and Governance Stability ($\Phi$). If $M$ rises without $D$ and $\Phi$ keeping pace, output quality fluctuates violently.

4️⃣ What is the Machine Gate decision model?

It is a threshold model for stable decision-making: $P_{decision}(t) = G1 \cdot G2 \cdot G3$. The first gate, $G1 = (S \cdot D \cdot \Phi) / Noise$, emphasizes that without semantic clarity and governance, decision stability is eroded by information noise.

5️⃣ What is “Structural Risk Latency (SRL)”?

SRL is the governance gap when environmental change ($\Delta E$) outpaces institutional updates ($\Delta A$). When $SRL > 0$, risks accumulate at a systemic level because the administrative logic is decoupled from reality.

6️⃣ Why isn’t banning AI an effective strategy?

AI is now infrastructure. Prohibition is unfeasible. Managing risk requires establishing “usage thresholds”—requiring students to reconstruct and challenge AI outputs—rather than simple reliance or total bans.

7️⃣ How do different educational stages map to risk variables?

- Preschool: Semantic Continuity and Focus ($S$)

- Primary: Logical Continuity and Causal Understanding ($D$)

- Middle and High School: Information Governance and Counter-argumentation ($\Phi$)

- University: Risk Extrapolation and Model Building (Stabilization)

8️⃣ What is the mission of Higher Ed in this era?

Beyond professional knowledge, it must cultivate individuals who can maintain judgment stability in high-noise environments. Uncertainty analysis and model integration become core professional skills.

9️⃣ What is the most scarce ability in a High-Multiplier Civilization?

Not tool proficiency, but Semantic Clarity, Judgment Density, and Structural Governance. These determine the stability of human-machine collaboration and the quality of civilizational decisions.