Choosing the Least Painful Path

How Humans Hand Over Judgment to Systems in the AI Era

Nelson Chou|Cultural Systems Observer・AI Semantic Engineering Practitioner

Why This Article Exists

This is not an article about technological progress in AI.

Nor is it a cautionary tale about a future where truth becomes impossible to discern.

This is an observation of how humans, in an AI-driven and system-mediated world, voluntarily choose to outsource judgment.

The core question here is not what technology can do,

but why humans are increasingly willing to stop deciding for themselves.

S0|When We Say “This Is Cognitive Warfare,” a Choice Has Already Been Made

In today’s environment, whenever discussions involving AI, algorithms, images, or narratives emerge,

most people respond not by examining the content, but by assigning it a label.

“Is this cognitive warfare?”

It sounds like vigilance.

In reality, it is closer to instant closure.

Once the label is applied, the problem is neatly filed away:

no need to examine structure,

no need to trace origins,

no need to admit uncertainty.

The world returns to a familiar coordinate system—

enemies, motives, positions, right and wrong.

This is not stupidity.

It is an extremely efficient mode of understanding.

In an environment where information density far exceeds human cognitive capacity,

labels function as energy-saving devices for the mind.

But at that exact moment,

judgment stops happening.

It is quietly handed over to a ready-made framework.

S1|The Misplaced Enemy: Why We Need a Perpetrator

When faced with structural change, humans instinctively search for an external cause.

Industrialization produced alienation—machines were blamed.

Media distorted reality—manipulators were blamed.

Algorithms reshaped perception—platforms, AI, and invisible hands were blamed.

This narrative offers one powerful benefit:

as long as an enemy exists, humans remain victims rather than participants.

Yet this framing conceals a far more uncomfortable truth—

some transformations were not imposed.

They were chosen.

No one is forced to consume only summaries.

No one is required to remain inside echo chambers.

No one is commanded to abandon slow thinking.

These paths dominate not because they are malicious,

but because they are stable, low-risk, and emotionally inexpensive.

A world that delivers conclusions, clarifies positions, and removes hesitation

does not feel alien to most people.

It is, in fact, remarkably considerate.

S2|The Real Turning Point: When Discernment Becomes Unprofitable

The real shift did not occur when truth became difficult to verify.

It occurred when people began to feel that verification itself was no longer worth the cost.

Verification takes time.

Understanding requires context.

Admitting “I’m not sure yet” requires psychological space.

But within systems optimized for instant feedback,

these behaviors are slow, uncomfortable, and unrewarded.

Usability becomes the dominant value.

If content flows smoothly, appears complete, and aligns with existing beliefs,

it has fulfilled its function.

The question quietly changes:

from “Is this true?”

to “Can this be used?”

This is not deception.

It is a rational withdrawal—

an exit from a role that demands continuous friction and uncertainty.

S3|Echo Chambers and Summaries: When Systems Assume the Burden of Thinking

Algorithms, summaries, and explainers succeed not because they manipulate,

but because they reduce cognitive burden.

In an environment of overload,

pre-packaged viewpoints and pre-filtered conclusions do not provoke resistance.

They offer relief.

Echo chambers are not conspiracies.

They are human-centered designs.

They spare individuals from constant verification, relentless doubt,

and the discomfort of being wrong.

Within such structures,

thinking is no longer a process.

It becomes a function outsourced to systems.

You still have opinions—

they were simply assembled before you arrived.

This is not brainwashing.

It is stable, frictionless cognitive subcontracting.

S4|The Cost of Clarity: Why Understanding Does Not Guarantee Happiness

Some individuals, however, remain visibly out of sync.

They continue to trace context.

They tolerate uncertainty.

They hesitate before conclusions.

This state is not comfortable.

Clarity offers no immediate reward.

It does not ensure emotional stability.

Instead, it often brings delay, isolation, and misunderstanding.

In systems that prioritize efficiency and alignment,

hesitation is read as weakness,

and questions as disruption.

If you recognize that much of modern judgment has been outsourced

to systems, echo chambers, and summaries,

then unfortunately—

you are likely among the lucid,

and unlikely to be among the contented.

This is not a moral judgment.

It is a description of a condition.

S5|Red Pills and Blue Pills: An Outdated Yet Precise Metaphor

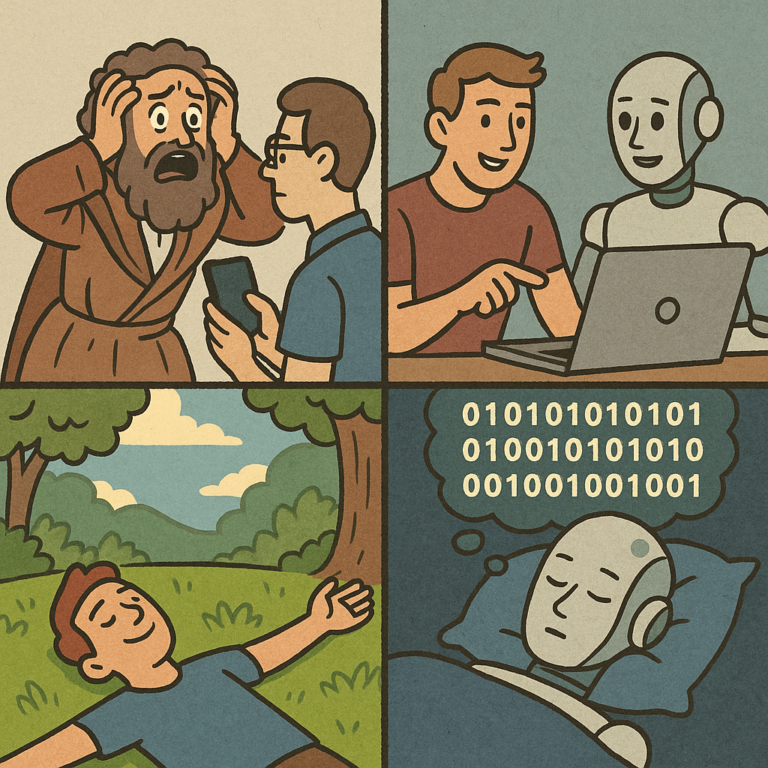

The red pill represents truth.

The blue pill represents returning to a state

where systems think on your behalf.

The true cruelty of The Matrix lies not in the choice itself,

but in what follows.

Those who awaken must consume unpalatable synthetic food,

endure bodily decay, and bear irreversible reality.

Truth offers no aesthetic compensation.

The system’s temptation, however, is concrete.

It does not promise freedom or meaning.

It offers a transaction:

You no longer need to know.

The system will arrange comfort.

The steak is fake—

but the taste is real.

Memories can be rewritten—

comfort does not ask where it comes from.

The crew member who betrays the others is not foolish.

He simply admits that

the cost of lucidity exceeds what he is willing to pay.

Thus, most choices are not mistakes.

They are rational calculations—

selecting the path of least pain.

S6|A Science Fiction Ending Without Aliens (In the Spirit of Ni Kuang)

Most science fiction imagines civilization ending through external forces—

aliens, artificial gods, or superior intelligences.

Ni Kuang once suggested a colder ending.

When civilization disappears,

no invaders arrive.

Humans simply make rational choices, repeatedly—

outsourcing judgment, simplifying sensation,

and handing uncertainty to systems.

The world becomes smoother, faster, and less demanding.

No resistance.

No coercion.

No victims.

Everything is voluntary.

If this is a science fiction story,

the twist is not at the end.

There is no invasion.

No war.

No conspiracy.

Only a civilization that fully understands its actions—

and still chooses

the least painful path with no return.

And the story holds

because everything appears

entirely reasonable.

FAQ

Q1: Is this article arguing that AI or algorithms are controlling humans?

No. This article does not advance a control or manipulation narrative. It examines human choice—specifically, why individuals rationally choose to outsource judgment to systems when the cost of independent discernment becomes too high.

Q2: What does “cognitive outsourcing” mean in the AI era?

Cognitive outsourcing refers to the transfer of judgment, verification, and meaning-making from individuals to systems such as algorithms, summaries, recommendation engines, and pre-packaged narratives, in exchange for efficiency, stability, and reduced cognitive friction.

Q3: Why do people seem less concerned with truth than before?

It is not indifference to truth, but a shift in incentives. In high-speed, high-feedback environments, usability and coherence often provide more immediate value than verifiability, making truth a deferred or optional priority.

Q4: Is labeling everything as “cognitive warfare” problematic?

Yes. While the label offers fast explanation, it functions as a cognitive shortcut that externalizes responsibility and obscures the role of voluntary adaptation, preference, and cost-based decision-making by individuals.

Q5: Are echo chambers inherently harmful?

Not inherently. Echo chambers provide emotional safety, stability, and social belonging. Their risk lies in being mistaken for a complete representation of reality, thereby reducing opportunities for self-correction and cross-context understanding.

Q6: What does it mean when systems “think on our behalf”?

It means that prioritization, filtering, interpretation, and conclusion-building are handled by systems, while humans consume outcomes without engaging in the formation process that produced them.

Q7: Is clarity or lucidity necessarily better for individuals?

Not necessarily. Lucidity is a high-cost state involving uncertainty, delayed reward, and psychological friction. It does not guarantee happiness or social reinforcement, and often produces isolation rather than advantage.

Q8: Why does The Matrix metaphor still apply today?

Because today’s “blue pill” is optimized, comfortable, and voluntary. The choice is no longer framed as truth versus deception, but as pain management versus cognitive burden, making the metaphor more accurate—and more unsettling—than when it was first introduced.